Post by swamprat on Mar 22, 2019 15:43:44 GMT

It's simple; matter ages better...

Physicists reveal why matter dominates our universe

Date: March 21, 2019

Source: Syracuse University

Summary:

Physicists have confirmed that matter and antimatter decay differently for elementary particles containing charmed quarks.

Physicists in the College of Arts and Sciences at Syracuse University have confirmed that matter and antimatter decay differently for elementary particles containing charmed quarks.

Distinguished Professor Sheldon Stone says the findings are a first, although matter-antimatter asymmetry has been observed before in particles with strange quarks or beauty quarks.

He and members of the College's High-Energy Physics (HEP) research group have measured, for the first time and with 99.999-percent certainty, a difference in the way D0 mesons and anti-D0 mesons transform into more stable byproducts.
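For readers who think in "sigmas", here is a rough sketch of how a certainty figure like the 99.999 percent quoted above maps onto standard deviations of a normal distribution. The article does not say how the certainty was computed, so the Gaussian assumption, the two-sided convention and the function name `confidence_to_sigma` are all mine, not the experiment's.

```python
from statistics import NormalDist


def confidence_to_sigma(confidence: float, two_sided: bool = True) -> float:
    """Convert a confidence level (e.g. 0.99999) into Gaussian standard deviations.

    Assumption: the quoted certainty is treated as a normal-distribution
    confidence level; the article itself does not state this.
    """
    tail = 1.0 - confidence          # probability left outside the interval
    if two_sided:
        tail /= 2.0                  # split the leftover probability over both tails
    return NormalDist().inv_cdf(1.0 - tail)


if __name__ == "__main__":
    print(f"two-sided: {confidence_to_sigma(0.99999):.2f} sigma")
    print(f"one-sided: {confidence_to_sigma(0.99999, two_sided=False):.2f} sigma")
```

Either convention lands a bit above four standard deviations, which is the general neighborhood particle physicists look for before calling something an observation.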

Mesons are subatomic particles composed of one quark and one antiquark, bound together by strong interactions.

"There have been many attempts to measure matter-antimatter asymmetry, but, until now, no one has succeeded," says Stone, who collaborates on the Large Hadron Collider beauty (LHCb) experiment at the CERN laboratory in Geneva, Switzerland. "It's a milestone in antimatter research."

The findings may also indicate new physics beyond the Standard Model, which describes how fundamental particles interact with one another. "Till then, we need to await theoretical attempts to explain the observation in less esoteric means," he adds.

Every particle of matter has a corresponding antiparticle, identical in every way, but with an opposite charge. Precision studies of hydrogen and antihydrogen atoms, for example, reveal similarities to beyond the billionth decimal place.

When matter and antimatter particles come into contact, they annihilate each other in a burst of energy -- similar to what happened in the Big Bang, some 14 billion years ago.

"That's why there is so little naturally occurring antimatter in the Universe around us," says Stone, a Fellow of the American Physical Society, which has awarded him this year's W.K.H. Panofsky Prize in Experimental Particle Physics.

The question on Stone's mind involves the equal-but-opposite nature of matter and antimatter. "If the same amount of matter and antimatter exploded into existence at the birth of the Universe, there should have been nothing left behind but pure energy. Obviously, that didn't happen," he says in a whiff of understatement.

Thus, Stone and his LHCb colleagues have been searching for subtle differences in matter and antimatter to understand why matter is so prevalent.

The answer may lie at CERN, where scientists create antimatter by smashing protons together in the Large Hadron Collider (LHC), the world's biggest, most powerful particle accelerator. The more energy the LHC produces, the more massive are the particles -- and antiparticles -- formed during collision.

It is in the debris of these collisions that scientists such as Ivan Polyakov, a postdoc in Syracuse's HEP group, hunt for particle ingredients.

"We don't see antimatter in our world, so we have to artificially produce it," he says. "The data from these collisions enables us to map the decay and transformation of unstable particles into more stable byproducts."

HEP is renowned for its pioneering research into quarks -- elementary particles that are the building blocks of matter. There are six types, or flavors, of quarks, but scientists usually talk about them in pairs: up/down, charm/strange and top/bottom. Each pair has a corresponding mass and fractional electric charge.

In addition to the beauty quark (the "b" in "LHCb"), HEP is interested in the charmed quark. Despite its relatively high mass, a charmed quark lives a fleeting existence before decaying into something more stable.

Recently, HEP studied two versions of the same particle. One version contained a charmed quark and an antimatter version of an up quark, called the anti-up quark. The other version had an anti-charm quark and an up quark.

Using LHC data, they identified both versions of the particle, well into the tens of millions, and counted the number of times each particle decayed into new byproducts.

"The ratio of the two possible outcomes should have been identical for both sets of particles, but we found that the ratios differed by about a tenth of a percent," Stone says. "This proves that charmed matter and antimatter particles are not totally interchangeable."
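The counting comparison Stone describes can be sketched in a few lines. The decay counts below are invented purely for illustration (the article gives no numbers beyond "tens of millions" and "about a tenth of a percent"), and the function names are hypothetical.

```python
def outcome_ratio(count_outcome_a: int, count_outcome_b: int) -> float:
    """Ratio of the two possible decay outcomes within one sample."""
    return count_outcome_a / count_outcome_b


def relative_difference(ratio_matter: float, ratio_antimatter: float) -> float:
    """Difference between the two ratios, relative to their average."""
    mean = (ratio_matter + ratio_antimatter) / 2.0
    return (ratio_matter - ratio_antimatter) / mean


if __name__ == "__main__":
    # Hypothetical counts, NOT data from the experiment.
    matter = outcome_ratio(30_030_000, 10_000_000)
    antimatter = outcome_ratio(30_000_000, 10_000_000)
    diff = relative_difference(matter, antimatter)
    print(f"ratios differ by {diff * 100:.2f} percent")
```

With perfect matter-antimatter symmetry the two ratios would agree and the difference would be zero within statistical noise, which is why such enormous samples are needed to see a tenth-of-a-percent effect.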

Adds Polyakov, "Particles might look the same on the outside, but they behave differently on the inside. That is the puzzle of antimatter."

The idea that matter and antimatter behave differently is not new. Previous studies of particles with strange quarks and bottom quarks have confirmed as much.

What makes this study unique, Stone concludes, is that it is the first time anyone has witnessed particles with charmed quarks being asymmetrical: "It's one for the history books."

HEP's work is supported by the National Science Foundation.

Story Source:

Materials provided by Syracuse University. Note: Content may be edited for style and length.

www.sciencedaily.com/releases/2019/03/190321130309.htm

Conclusion: How one matures and grows old is important.....

Post by swamprat on Mar 26, 2019 15:58:58 GMT

Navy Files Patent on Room-Temperature Superconductivity

A long-term technological holy grail is room-temperature superconductivity. Normal electrical conductors have resistance, and convert part of the electrical energy that flows through them to heat, which is lost. Superconductivity, a consequence of quantum mechanics, allows an electrical current to flow without any resistance at all, and would allow efficient transmission of electricity over long distances, more efficient motors, and magnetic levitation for devices such as high speed vehicles.

Superconductivity was discovered experimentally in 1911, but was not explained theoretically until 1957 by Bardeen, Cooper, and Schrieffer, who shared the 1972 Nobel Prize in physics for their theory. The early superconductors required very low temperatures to operate: on the order of the temperature of liquid helium (around 4 K). It is very expensive to produce liquid helium and keep it liquid: while liquid nitrogen costs about as much as milk, liquid helium costs as much as Scotch whisky.

In 1986 two researchers at IBM Zürich, Georg Bednorz and K. Alex Müller, discovered that a ceramic material became superconductive at above 30 K, and within a year related ceramics were found to superconduct above the temperature of liquid nitrogen (77 K). Bednorz and Müller won the Nobel Prize for this discovery the very next year, but to this day there is no satisfactory theory for how this high-temperature superconductivity works; it is a major unsolved problem in theoretical physics.

Milk is a lot cheaper than Scotch (you can buy as much liquid nitrogen as you wish at your local welding supply shop—just bring a thermos), and there are substantial technological applications of this phenomenon even though we haven’t a clue how or why it works.

But still, liquid nitrogen is messy to deal with. The ideal would be a superconductor that worked at room temperature without the need for refrigeration. That’s something you could potentially use to replace copper and aluminum wire in power lines and all kinds of electrical equipment, permitting transmission of power without loss and waste heat. So far, this has eluded everybody who has attempted to discover it.

Illustration of the room-temperature superconductor design described in the U.S. Navy's patent application. Credit: U.S. Patent and Trademark Office

On 2019-02-21 a U.S. Patent Application, US 2019/0058105 A1 “Piezoelectricity-Induced Room Temperature Superconductor” [PDF], was filed on behalf of the U.S. Navy, which claims that by abruptly vibrating a conductor by means of pulsed power it is possible to achieve room-temperature superconductivity. The patent application modestly notes,

The achievement of room temperature superconductivity (RTSC) represents a highly disruptive technology, capable of a total paradigm change in Science and Technology, rather than just a paradigm shift. Hence, its military and commercial value is considerable.

There is a great deal of speculation in the patent application as to the mechanism which might cause the electron pairing that produces the superconductivity, but there is no specific claim of a mechanism. No experimental data are presented to substantiate the claim of superconductivity.

Ratburger member and physicist Jack Sarfatti’s quick take, in an E-mail to his list of correspondents, is:

This seems consistent with my Frohlich pump proposal.

www.cosmosandhistory.org/index.php/journal/article/view/690

“An electromagnetic coil is circumferentially positioned around the coating such that when the coil is activated with a pulsed current; a non-linear vibration is induced, enabling room temperature superconductivity.”

The pulsed current coil is the resonant Frohlich pump.

The effective non-equilibrium temperature of the pulsed device is

T’ = T/[1 + k(pulsed current power)]

T is the ambient thermodynamic equilibrium temperature when the pulse is switched off.

Applying the pulse lowers the effective temperature to the critical temperature Tc for the onset of superconductivity (macro-quantum coherence).
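To make the quoted expression concrete, here is a minimal sketch that evaluates it and inverts it for the pulse power. This only plays with the formula as written in the e-mail; it is a speculative proposal, not established physics, and the coupling constant k, its units and the sample numbers are placeholders I made up.

```python
def effective_temperature(ambient_k: float, k: float, pulse_power: float) -> float:
    """Evaluate T' = T / [1 + k * P] from the quoted e-mail.

    k and pulse_power are in arbitrary, mutually consistent units (assumption).
    """
    denominator = 1.0 + k * pulse_power
    if denominator <= 0.0:
        raise ValueError("1 + k*P must be positive")
    return ambient_k / denominator


def power_for_critical_temperature(ambient_k: float, critical_k: float, k: float) -> float:
    """Invert the same expression: P = (T / Tc - 1) / k."""
    return (ambient_k / critical_k - 1.0) / k


if __name__ == "__main__":
    # Hypothetical values: 300 K ambient, k = 0.5 per unit power.
    print(effective_temperature(300.0, 0.5, 2.0))            # halves the temperature
    print(power_for_critical_temperature(300.0, 150.0, 0.5))
```

With the pulse switched off (P = 0) the function returns the ambient temperature unchanged, matching the statement that T is the equilibrium temperature without the pulse.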

Read more at:

phys.org/news/2019-02-navy-patent-room-temperature-superconductor.html

Post by swamprat on Apr 18, 2019 15:03:44 GMT

And then what happens when your brain gets hacked.......?

Interesting, since there is some thinking about alien life possibly being a combination of an intelligent species AND artificial intelligence......

Heads in the cloud: Scientists predict internet of thoughts 'within decades'

A 'human brain/cloud interface' will give people instant access to vast knowledge and computing power via thought alone, predict experts

Date: April 12, 2019

Source: Frontiers

Summary:

Researchers predict that exponential progress in nanotechnology, nanomedicine, artificial intelligence, and computation will lead this century to the development of a 'human brain/cloud interface' (B/CI), that connects neurons and synapses in the brain to vast cloud-computing networks in real time.

Imagine a future technology that would provide instant access to the world's knowledge and artificial intelligence, simply by thinking about a specific topic or question. Communications, education, work, and the world as we know it would be transformed.

Writing in Frontiers in Neuroscience, an international collaboration led by researchers at UC Berkeley and the US Institute for Molecular Manufacturing predicts that exponential progress in nanotechnology, nanomedicine, AI, and computation will lead this century to the development of a "Human Brain/Cloud Interface" (B/CI), that connects neurons and synapses in the brain to vast cloud-computing networks in real time.

Nanobots on the brain

The B/CI concept was initially proposed by futurist-author-inventor Ray Kurzweil, who suggested that neural nanorobots -- brainchild of Robert Freitas, Jr., senior author of the research -- could be used to connect the neocortex of the human brain to a "synthetic neocortex" in the cloud. Our wrinkled neocortex is the newest, smartest, 'conscious' part of the brain.

Freitas' proposed neural nanorobots would provide direct, real-time monitoring and control of signals to and from brain cells.

"These devices would navigate the human vasculature, cross the blood-brain barrier, and precisely autoposition themselves among, or even within brain cells," explains Freitas. "They would then wirelessly transmit encoded information to and from a cloud-based supercomputer network for real-time brain-state monitoring and data extraction."

The internet of thoughts

This cortex in the cloud would allow "Matrix"-style downloading of information to the brain, the group claims.

"A human B/CI system mediated by neuralnanorobotics could empower individuals with instantaneous access to all cumulative human knowledge available in the cloud, while significantly improving human learning capacities and intelligence," says lead author Dr. Nuno Martins.

B/CI technology might also allow us to create a future "global superbrain" that would connect networks of individual human brains and AIs to enable collective thought.

"While not yet particularly sophisticated, an experimental human 'BrainNet' system has already been tested, enabling thought-driven information exchange via the cloud between individual brains," explains Martins. "It used electrical signals recorded through the skull of 'senders' and magnetic stimulation through the skull of 'receivers,' allowing for performing cooperative tasks.

"With the advance of neuralnanorobotics, we envisage the future creation of 'superbrains' that can harness the thoughts and thinking power of any number of humans and machines in real time. This shared cognition could revolutionize democracy, enhance empathy, and ultimately unite culturally diverse groups into a truly global society."

When can we connect?

According to the group's estimates, even existing supercomputers have processing speeds capable of handling the necessary volumes of neural data for B/CI -- and they're getting faster, fast.

Rather, transferring neural data to and from supercomputers in the cloud is likely to be the ultimate bottleneck in B/CI development.

"This challenge includes not only finding the bandwidth for global data transmission," cautions Martins, "but also, how to enable data exchange with neurons via tiny devices embedded deep in the brain."

One solution proposed by the authors is the use of 'magnetoelectric nanoparticles' to effectively amplify communication between neurons and the cloud.

"These nanoparticles have been used already in living mice to couple external magnetic fields to neuronal electric fields -- that is, to detect and locally amplify these magnetic signals and so allow them to alter the electrical activity of neurons," explains Martins. "This could work in reverse, too: electrical signals produced by neurons and nanorobots could be amplified via magnetoelectric nanoparticles, to allow their detection outside of the skull."

Getting these nanoparticles -- and nanorobots -- safely into the brain via the circulation, would be perhaps the greatest challenge of all in B/CI.

"A detailed analysis of the biodistribution and biocompatibility of nanoparticles is required before they can be considered for human development. Nevertheless, with these and other promising technologies for B/CI developing at an ever-increasing rate, an 'internet of thoughts' could become a reality before the turn of the century," Martins concludes.

Story Source:

Materials provided by Frontiers. Note: Content may be edited for style and length.

Journal Reference:

1. Nuno R. B. Martins, Amara Angelica, Krishnan Chakravarthy, Yuriy Svidinenko, Frank J. Boehm, Ioan Opris, Mikhail A. Lebedev, Melanie Swan, Steven A. Garan, Jeffrey V. Rosenfeld, Tad Hogg, Robert A. Freitas. Human Brain/Cloud Interface. Frontiers in Neuroscience, 2019; 13 DOI: 10.3389/fnins.2019.00112

Post by swamprat on May 5, 2019 15:34:34 GMT

Richard Feynman would be so proud!

Antimatter quantum interferometry makes its debut

05 May 2019 Belle Dumé

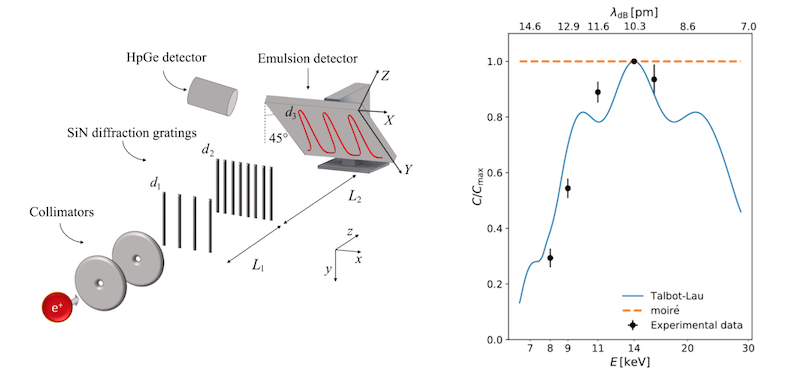

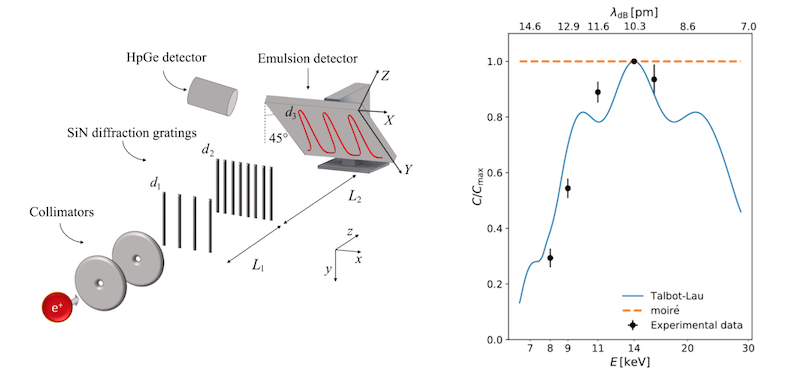

Left, schematics of the apparatus (positron beam, collimators, SiN gratings and emulsion detector; an HpGe detector is used as a beam monitor). Right, single-particle interference visibility as a function of the positron energy is in agreement with quantum mechanics (blue) and disagrees with classical physics (orange dashed). Courtesy: Politecnico di Milano

Researchers in Italy and Switzerland have performed the first ever double-slit-like experiment on antimatter using a Talbot-Lau interferometer and a positron beam.

The classic double-slit experiment confirmed that light and matter have the characteristics of both waves and particles, a duality that was first put forward by de Broglie in 1923. This superposition principle is one of the main postulates of quantum mechanics and researchers have since been able to diffract and interfere matter waves of objects of increasing complexity – from electrons to neutrons and molecules.

The QUPLAS (QUantum Interferometry and Gravitation with Positrons and LAsers) collaboration, which includes researchers from the Politecnico di Milano L-NESS in Como, the Milan unit of the Istituto Nazionale di Fisica Nucleare (INFN), the Università degli Studi di Milano and the University of Bern, has now performed the first interference experiment on positrons – the antimatter equivalent of electrons.

“The experiment was first proposed for electrons by Albert Einstein and Richard Feynman as a thought experiment and realized by Merli, Missiroli and Pozzi in 1976 and more systematically by Tonomura and colleagues in 1989,” explains QUPLAS spokesman Marco Giammarchi of the INFN. “In this original experiment, which was voted by Physics World as the most beautiful experiment, the researchers demonstrated the specifically quantum effect of single particle interference, which – according to Feynman – is the central ‘mystery’ of quantum theory.”

Talbot-Lau interferometer

In the new version, Giammarchi and colleagues made use of a modified version of a period-magnifying two-grating “Talbot-Lau” interferometer. This device contains material diffraction gratings and produces high-contrast periodic fringes.

“This interferometer operates in the intermediate field region (as opposed to the far-field in the classic Merli-Missiroli-Pozzi experiment),” explains Giammarchi. “Here, particle trajectories become waves, so that interference starts to build up from two (or more) neighbouring slits because of the wave nature of the particles. We say it is magnifying because it enhances the interference pattern from the micron-scale of the gratings to up to six microns at the detector thanks to its unequally spaced gratings. This allows us to detect the interference pattern produced.”

The device is made of gold-coated 700-nm-thick silicon nitride gratings with periodicities of (1.210 ± 0.001) μm (d1) and (1.004 ± 0.001) μm (d2) to produce a periodic interference pattern d3 of (5.90 ± 0.04) μm.
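The three periods quoted above hang together numerically. The article does not give the formula, so the relation used below, 1/d3 = 1/d2 - 1/d1, is my assumption; it is a sketch that happens to reproduce the quoted figure, not a statement of how the collaboration designed the device.

```python
def magnified_period(d1_um: float, d2_um: float) -> float:
    """Return d3 = d1 * d2 / (d1 - d2), in the same units as the inputs.

    Assumed relation for two unequally spaced gratings (not stated in the article).
    """
    if d1_um <= d2_um:
        raise ValueError("this sketch expects d1 > d2")
    return d1_um * d2_um / (d1_um - d2_um)


if __name__ == "__main__":
    d3 = magnified_period(1.210, 1.004)
    print(f"d3 = {d3:.2f} um")  # prints "d3 = 5.90 um"
```

The small difference between the two grating periods is what stretches a roughly one-micron pattern to about six microns, large enough for the emulsion detector to resolve.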

Five interference patterns

The researchers detected the fringes in the interference pattern using a sub-micron resolution device known as a nuclear emulsion detector (made in Bern), which works as a photographic film since it contains silver bromide crystals embedded in a 50-μm-thick gelatine matrix. They observed five interference patterns at energies of 8, 9, 11, 14 and 16 keV.

“We tuned our system to provide maximum visibility at 14 keV, so the pattern visibility varies with respect to energy,” says Giammarchi. “This variation is a purely quantum mechanical effect and comes from the fact that a change in energy entails a change in the de Broglie wavelength.

“This visibility dependence as a function of energy is predicted by quantum theory and cannot be explained by classical physics,” he tells Physics World. “The visibility behaviour we have observed agrees with the theory prediction.”
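The link between beam energy and de Broglie wavelength that Giammarchi mentions can be worked out directly. This sketch uses the standard relativistic momentum relation with rounded physical constants; the function name is mine, and it says nothing about the visibility curve itself, which depends on the interferometer geometry.

```python
import math

HC_EV_NM = 1239.841984         # Planck constant times speed of light, in eV*nm
POSITRON_REST_EV = 510_998.95  # positron rest energy m*c^2 in eV (same as the electron)


def de_broglie_wavelength_pm(kinetic_ev: float) -> float:
    """De Broglie wavelength in picometres for a positron of given kinetic energy."""
    # Relativistic momentum: (p*c)^2 = E_k^2 + 2 * E_k * m*c^2
    pc_ev = math.sqrt(kinetic_ev ** 2 + 2.0 * kinetic_ev * POSITRON_REST_EV)
    return HC_EV_NM / pc_ev * 1000.0  # nm -> pm


if __name__ == "__main__":
    for energy_kev in (8, 9, 11, 14, 16):
        wavelength = de_broglie_wavelength_pm(energy_kev * 1000.0)
        print(f"{energy_kev:>2} keV -> {wavelength:.2f} pm")
```

The wavelength shrinks as the energy goes up, which is why a device tuned for 14 keV shows weaker fringes at the other four energies.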

In their experiments, the researchers used positrons from the variable energy positron beam facility at L-NESS (the Laboratory for Nanostructure Epitaxy and Spintronics on Silicon) in Como. They implanted positrons (e+) from the beta decay of a 22Na radioactive source on a monocrystalline tungsten film, where they were emitted with a kinetic energy of about 3 eV. They then accelerated slow positrons up to 16 keV using an electrostatic system to create a monochromatic and continuous positron beam that has an energy spread of less than 0.1%. The positrons are emitted at a rate of around 5 × 10^3 e+/s and the beam can be tuned to a spot with a focal size that is roughly several millimetres at full width at half maximum (FWHM).

Testing the weak equivalence principle and the CPT theorem

The new work heralds the beginning of the field of antimatter quantum interferometry and the main application for the technique will be to explore antimatter neutral systems such as antihydrogen, which is the antimatter equivalent of hydrogen and contains a positron and an antiproton. These studies could allow researchers to measure the gravitational properties of antimatter (does it fall up or down, for example?). Such measurements are of fundamental importance for testing the weak equivalence principle (which could have far-reaching consequences for cosmology) and the CPT theorem (which says that the laws of physics remain the same if the charge, parity and time-reversal properties of a particle are inverted together).

“Violation of these principles could show that the Standard Model of particle physics is incomplete or hold the key to our understanding of the mystery of matter-antimatter asymmetry in the universe,” explains Giammarchi.

The Como-Milan-Bern team will now be looking to build up the positronium beam at L-NESS so that it can start making such measurements. “A side application of what we have achieved could be to study decoherence with antimatter systems for the first time.”

physicsworld.com/a/antimatter-quantum-interferometry-makes-its-debut/

PS: If you are over 60 or under 30 and understand all this, you are weird.

PSS: Where are the Americans? Oh, wait! That's right! Our government is more interested in free handouts than in science!

Post by swamprat on May 23, 2019 16:45:02 GMT

Scientists break record for highest-temperature superconductor

Experiment produces new material that can conduct electricity perfectly

Date: May 22, 2019

Source: University of Chicago

Summary:

An international research team of scientists has discovered superconductivity -- the ability to conduct electricity perfectly -- at the highest temperatures ever recorded.

Lanthanum (periodic table symbol). Credit: © concept w / Adobe Stock

University of Chicago scientists are part of an international research team that has discovered superconductivity -- the ability to conduct electricity perfectly -- at the highest temperatures ever recorded.

Using advanced technology at UChicago-affiliated Argonne National Laboratory, the team studied a class of materials in which they observed superconductivity at temperatures of about minus-23 degrees Celsius (minus-9 degrees Fahrenheit) -- a jump of about 50 degrees compared to the previous confirmed record.

Though the superconductivity happened under extremely high pressure, the result still represents a big step toward creating superconductivity at room temperature -- the ultimate goal for scientists to be able to use this phenomenon for advanced technologies. The results were published May 23 in the journal Nature; Vitali Prakapenka, a research professor at the University of Chicago, and Eran Greenberg, a postdoctoral scholar at the University of Chicago, are co-authors of the research.

Just as a copper wire conducts electricity better than a rubber tube, certain kinds of materials are better at becoming superconductive, a state defined by two main properties: The material offers zero resistance to electrical current and cannot be penetrated by magnetic fields. The potential uses for this are as vast as they are exciting: electrical wires without diminishing currents, extremely fast supercomputers and efficient magnetic levitation trains.

But scientists have previously only been able to create superconducting materials when they are cooled to extremely cold temperatures -- initially, minus-240 degrees Celsius and more recently about minus-73 degrees Celsius. Since such cooling is expensive, it has limited their applications in the world at large.
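The temperature figures in the article are easy to cross-check. This is just the textbook unit conversion, sketched with helper names of my own choosing.

```python
def celsius_to_fahrenheit(celsius: float) -> float:
    """Standard conversion: F = C * 9/5 + 32."""
    return celsius * 9.0 / 5.0 + 32.0


def celsius_to_kelvin(celsius: float) -> float:
    """Standard conversion: K = C + 273.15."""
    return celsius + 273.15


if __name__ == "__main__":
    new_record_c = -23.0       # figure quoted in the article
    previous_record_c = -73.0  # "more recently" figure quoted in the article
    print(f"{new_record_c} C = {celsius_to_fahrenheit(new_record_c):.1f} F")
    print(f"{new_record_c} C = {celsius_to_kelvin(new_record_c):.2f} K")
    print(f"jump = {new_record_c - previous_record_c:.0f} degrees C")
```

The results line up with the article's minus-9 Fahrenheit, the 250 K in the paper's title, and the "about 50 degrees" jump.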

Recent theoretical predictions have shown that a new class of materials of superconducting hydrides could pave the way for higher-temperature superconductivity. Researchers at the Max Planck Institute for Chemistry in Germany teamed up with University of Chicago researchers to create one of these materials, called lanthanum superhydrides, test its superconductivity, and determine its structure and composition.

The only catch was that the material needed to be placed under extremely high pressure -- between 150 and 170 gigapascals, more than one and a half million times the pressure at sea level. Only under these high-pressure conditions did the material -- a tiny sample only a few microns across -- exhibit superconductivity at the new record temperature.
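The pressure comparison can be checked the same way. The only number not taken from the article is the standard atmosphere, 101,325 pascals, used here as "the pressure at sea level"; the function name is hypothetical.

```python
STANDARD_ATMOSPHERE_PA = 101_325.0  # one standard atmosphere, in pascals


def gigapascals_to_atmospheres(pressure_gpa: float) -> float:
    """Express a pressure given in gigapascals as multiples of sea-level pressure."""
    return pressure_gpa * 1e9 / STANDARD_ATMOSPHERE_PA


if __name__ == "__main__":
    for gpa in (150.0, 170.0):
        atm = gigapascals_to_atmospheres(gpa)
        print(f"{gpa:.0f} GPa is about {atm / 1e6:.2f} million atmospheres")
```

The range works out to roughly 1.5 to 1.7 million atmospheres, so the article's "one and a half million" is a fair round number, with the low end sitting just under it.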

In fact, the material showed three of the four characteristics needed to prove superconductivity: It dropped its electrical resistance, decreased its critical temperature under an external magnetic field and showed a temperature change when some elements were replaced with different isotopes. The fourth characteristic, called the Meissner effect, in which the material expels any magnetic field, was not detected. That's because the material is so small that this effect could not be observed, researchers said.

They used the Advanced Photon Source at Argonne National Laboratory, which provides ultra-bright, high-energy X-ray beams that have enabled breakthroughs in everything from better batteries to understanding the Earth's deep interior, to analyze the material. In the experiment, researchers within University of Chicago's Center for Advanced Radiation Sources squeezed a tiny sample of the material between two tiny diamonds to exert the pressure needed, then used the beamline's X-rays to probe its structure and composition.

Because the temperatures used in the experiment are within the normal range of many places in the world, the ultimate goal of room temperature -- or at least 0 degrees Celsius -- seems within reach.

The team is already continuing to collaborate to find new materials that can create superconductivity under more reasonable conditions.

"Our next goal is to reduce the pressure needed to synthesize samples, to bring the critical temperature closer to ambient, and perhaps even create samples that could be synthesized at high pressures, but still superconduct at normal pressures," Prakapenka said. "We are continuing to search for new and interesting compounds that will bring us new, and often unexpected, discoveries."

Story Source:

Materials provided by University of Chicago. Original written by Emily Ayshford. Note: Content may be edited for style and length.

Related Multimedia:

• Images of new superconducting material: news.uchicago.edu/story/scientists-break-record-highest-temperature-superconductor

Journal Reference:

1. A. P. Drozdov, P. P. Kong, V. S. Minkov, S. P. Besedin, M. A. Kuzovnikov, S. Mozaffari, L. Balicas, F. F. Balakirev, D. E. Graf, V. B. Prakapenka, E. Greenberg, D. A. Knyazev, M. Tkacz, M. I. Eremets. Superconductivity at 250 K in lanthanum hydride under high pressures. Nature, 2019; 569 (7757): 528 DOI: 10.1038/s41586-019-1201-8

www.sciencedaily.com/releases/2019/05/190522141823.htm

Post by swamprat on Jun 2, 2019 0:43:43 GMT

Sheldon Cooper says, "Geology isn't a science." But what does HE know!

Thanks for this Cliff!

Geology In

Heavy Metal Found in Meteoroids Kills Cancer Cells

Researches | 1 June 2019 6:25 PM

Rare metal from the asteroid that killed the dinosaurs can cure cancer, says professor

Iridium—the world’s second densest metal—can kill cancer cells by filling them with a deadly version of oxygen, while leaving healthy tissue unharmed.

First discovered in 1803, iridium gets its name from the Latin for “rainbow.” Hard, brittle, and yellow, the metal comes from the same family as platinum and is the world’s most corrosion-resistant metal.

Iridium is rare on Earth, but is abundant in meteoroids—and large amounts of iridium have been discovered in the Earth’s crust from around 66 million years ago, leading to the theory that it came to this planet with an asteroid which caused the extinction of the dinosaurs.

The researchers created a compound of iridium and organic material, which they can directly target towards cancerous cells, transferring energy to the cells to turn the oxygen (O2) inside them into singlet oxygen, which is poisonous and kills the cell—without harming any healthy tissue.

“This project is a leap forward in understanding how these new iridium-based anti-cancer compounds are attacking cancer cells, introducing different mechanisms of action to get around the resistance issue and tackle cancer from a different angle,” says study coauthor Cookson Chiu, a postgraduate researcher in the chemistry department at the University of Warwick.

Shining visible laser light through the skin onto the cancerous area triggers the process—this reaches the light-reactive coating of the compound and activates the metal to start filling the cancer with singlet oxygen.

Photochemotherapy—using laser light to target cancer—is fast emerging as a viable, effective, and non-invasive treatment. Patients are becoming increasingly resistant to traditional therapies, so it is vital to establish new pathways like this for fighting the disease.

The researchers found that after attacking a model tumor of lung cancer cells, which the researchers grew in the laboratory to form a tumor-like sphere, with red laser light (which can penetrate deeply through the skin), the activated organic-iridium compound penetrated and infused into every layer of the tumor to kill it—demonstrating how effective and far-reaching this treatment is.

They also proved that the method is safe to healthy cells by conducting the treatment on non-cancerous tissue and finding it had no effect.

“Our innovative approach to tackle cancer involving targeting important cellular proteins can lead to novel drugs with new mechanisms of action. These are urgently needed,” says Pingyu Zhang, a fellow in the chemistry department at the University of Warwick.

Furthermore, the researchers used state-of-the-art ultra-high resolution mass spectrometry to gain an unprecedented view of the individual proteins within the cancer cells—allowing them to determine precisely which proteins are attacked by the organic-iridium compound.

“Remarkable advances in modern mass spectrometry now allow us to analyze complex mixtures of proteins in cancer cells and pinpoint drug targets, on instruments that are sensitive enough to weigh even a single electron!” says Peter O’Connor, professor of analytical chemistry.

After analyzing huge amounts of data—thousands of proteins from the model cancer cells—they concluded that the iridium compound damaged the proteins for heat shock stress and glucose metabolism, both known as key molecules in cancer.

“The precious metal platinum is already used in more than 50 percent of cancer chemotherapies. The potential of other precious metals such as iridium to provide new targeted drugs which attack cancer cells in completely new ways and combat resistance, and which can be used safely with the minimum of side-effects, is now being explored,” says Peter Sadler, whose lab is in the department of chemistry at the University of Warwick.

Sadler adds: “It’s certainly now time to try to make good medical use of the iridium delivered to us by an asteroid 66 million years ago!”

www.geologyin.com/2017/12/heavy-metal-found-in-meteoroids-kills.html#KT0xWck66JpPhSG5.99

Post by swamprat on Jun 7, 2019 19:49:39 GMT

Post by swamprat on Jun 8, 2019 14:33:00 GMT

Aaahh, the infatuation of physicists! We gotta build that bigger collider!

Physicists Search for Monstrous Higgs Particle. It Could Seal the Fate of the Universe.

By Paul Sutter, Science & Astronomy

June 8, 2019

A subatomic particle illustration. (Image: © Shutterstock)

We all know and love the Higgs boson — which to physicists' chagrin has been mistakenly tagged in the media as the "God particle" — a subatomic particle first spotted in the Large Hadron Collider (LHC) back in 2012. That particle is a piece of a field that permeates all of space-time; it interacts with many particles, like electrons and quarks, providing those particles with mass, which is pretty cool.

But the Higgs that we spotted was surprisingly lightweight. According to our best estimates, it should have been a lot heavier. This opens up an interesting question: Sure, we spotted a Higgs boson, but was that the only Higgs boson? Are there more floating around out there doing their own things?

Though we don't have any evidence yet of a heavier Higgs, a team of researchers based at the LHC, the world's largest atom smasher, is digging into that question as we speak. And there's talk that as protons are smashed together inside the ring-shaped collider, hefty Higgs and even Higgs particles made up of various types of Higgs could come out of hiding.

If the heavy Higgs does indeed exist, then we need to reconfigure our understanding of the Standard Model of particle physics with the newfound realization that there's much more to the Higgs than meets the eye. And within those complex interactions, there might be a clue to everything from the mass of the ghostly neutrino particle to the ultimate fate of the universe.

All about the boson

Without the Higgs boson, pretty much the whole Standard Model comes crashing down. But to talk about the Higgs boson, we first need to understand how the Standard Model views the universe.

In our best conception of the subatomic world using the Standard Model, what we think of as particles aren't actually very important. Instead, there are fields. These fields permeate and soak up all of space and time. There is one field for each kind of particle. So, there's a field for electrons, a field for photons, and so on and so on. What you think of as particles are really local little vibrations in their particular fields. And when particles interact (by, say, bouncing off of each other), it's really the vibrations in the fields that are doing a very complicated dance.

The Higgs boson has a special kind of field. Like the other fields, it permeates all of space and time, and it also gets to talk and play with everybody else's fields.

But the Higgs' field has two very important jobs to do that can't be achieved by any other field.

Its first job is to talk to the W and Z bosons (via their respective fields), the carriers of the weak nuclear force. By talking to these other bosons, the Higgs is able to give them mass and make sure that they stay separated from the photons, the carriers of electromagnetic force. Without the Higgs boson running interference, all these carriers would blend together, and the two forces would merge into one.

The other job of the Higgs boson is to talk to other particles, like electrons; through these conversations, it also gives them mass. This all works out nicely, because we have no other way of explaining the masses of these particles.

Light and heavy

This was all worked out in the 1960s through a series of complicated but assuredly elegant calculations, but there's just one tiny hitch to the theory: There's no real way to predict the exact mass of the Higgs boson. In other words, when you go looking for the particle (which is the little local vibration of the much larger field) in a particle collider, you don't know exactly what you're looking for or where you're going to find it.

In 2012, scientists at the LHC announced the discovery of the Higgs boson after finding a few of the particles that represent the Higgs' field had been produced when protons were smashed into one another at near light-speed. These particles had a mass of 125 gigaelectronvolts (GeV), or about the equivalent of 125 protons — so it's kind of heavy but not incredibly huge.

At first glance, all that sounds fine. Physicists didn't really have a firm prediction for the mass of the Higgs boson, so it could be whatever it wanted to be; we happened to find the mass within the energy range of the LHC. Break out the bubbly, and let's start celebrating.

Except that there are some hesitant, kind-of-sort-of half-predictions about the mass of the Higgs boson based on the way it interacts with yet another particle, the top quark. Those calculations predict a number way higher than 125 GeV. It could just be that those predictions are wrong, but then we have to circle back to the math and figure out where things are going haywire. Or the mismatch between broad predictions and the reality of what was found inside the LHC could mean that there's more to the Higgs boson story.

Huge Higgs

There very well could be a whole plethora of Higgs bosons out there that are too heavy for us to see with our current generation of particle colliders. (The mass-energy thing goes back to Einstein's famous E=mc^2 equation, which shows that energy is mass and mass is energy. The higher a particle's mass, the more energy it has and the more energy it takes to create that hefty thing.)
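As a rough illustration of that mass-energy bookkeeping, the sketch below converts the 125 GeV figure quoted earlier into kilograms using E = mc^2 and compares it with the proton. The function name `gev_to_kg` and the constant names are made up for this example, and the constants are standard rounded reference values rather than numbers from the article. Since a proton's rest energy is a little under 1 GeV, the ratio comes out somewhat above the article's loose "about 125 protons."

```python
# Standard physical constants (rounded reference values, not from the article)
EV_TO_JOULE = 1.602176634e-19   # joules per electronvolt (exact by SI definition)
SPEED_OF_LIGHT = 299_792_458.0  # metres per second (exact by SI definition)
PROTON_REST_ENERGY_GEV = 0.938272  # proton rest energy in GeV


def gev_to_kg(energy_gev: float) -> float:
    """Convert a rest energy in GeV to a mass in kilograms via m = E / c^2."""
    energy_joules = energy_gev * 1e9 * EV_TO_JOULE
    return energy_joules / SPEED_OF_LIGHT**2


higgs_gev = 125.0  # mass of the observed Higgs boson, as quoted in the article
higgs_kg = gev_to_kg(higgs_gev)
higgs_in_protons = higgs_gev / PROTON_REST_ENERGY_GEV

print(f"Higgs mass: {higgs_kg:.3e} kg")
print(f"Higgs mass in proton masses: {higgs_in_protons:.1f}")
```

The same function shows why heavier particles need bigger colliders: a hypothetical Higgs ten times heavier would need at least ten times the energy concentrated in a single collision.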

In fact, some speculative theories that push our knowledge of physics beyond the Standard Model do predict the existence of these heavy Higgs bosons. The exact nature of these additional Higgs characters depends on the theory, of course, ranging anywhere from simply one or two extra-heavy Higgs fields to even composite structures made of multiple different kinds of Higgs bosons stuck together.

Theorists are hard at work trying to find any possible way to test these theories, since most of them are simply inaccessible to current experiments. In a recent paper submitted to the Journal of High Energy Physics and posted online to the preprint server arXiv, a team of physicists has advanced a proposal to search for more Higgs bosons, based on the peculiar way the particles might decay into lighter, more easily recognizable particles, such as electrons, neutrinos and photons. However, these decays are extremely rare, so while we can in principle find them with the LHC, it will take many more years of searching to collect enough data.

When it comes to the heavy Higgs, we're just going to have to be patient.

www.space.com/giant-higgs-fate-of-universe.html

Post by swamprat on Jun 22, 2019 14:54:33 GMT

We're getting there, people! Where will he/it be in another 100 years?!

First-ever successful mind-controlled robotic arm without brain implants

Date: June 19, 2019

Source: College of Engineering, Carnegie Mellon University

Summary:

Researchers have made a breakthrough in the field of noninvasive robotic device control. Using a noninvasive brain-computer interface (BCI), researchers have developed the first-ever successful mind-controlled robotic arm exhibiting the ability to continuously track and follow a computer cursor.

A team of researchers from Carnegie Mellon University, in collaboration with the University of Minnesota, has made a breakthrough in the field of noninvasive robotic device control. Using a noninvasive brain-computer interface (BCI), researchers have developed the first-ever successful mind-controlled robotic arm exhibiting the ability to continuously track and follow a computer cursor.

Being able to noninvasively control robotic devices using only thoughts will have broad applications, in particular benefiting the lives of paralyzed patients and those with movement disorders.

BCIs have been shown to achieve good performance for controlling robotic devices using only the signals sensed from brain implants. When robotic devices can be controlled with high precision, they can be used to complete a variety of daily tasks. Until now, however, BCIs successful in controlling robotic arms have used invasive brain implants. These implants require a substantial amount of medical and surgical expertise to correctly install and operate, not to mention cost and potential risks to subjects, and as such, their use has been limited to just a few clinical cases.

A grand challenge in BCI research is to develop less invasive or even totally noninvasive technology that would allow paralyzed patients to control their environment or robotic limbs using their own "thoughts." Such noninvasive BCI technology, if successful, would bring this much-needed capability to numerous patients and even potentially to the general population.

However, BCIs that use noninvasive external sensing, rather than brain implants, receive "dirtier" signals, which currently leads to lower resolution and less precise control. Thus, when using only the brain to control a robotic arm, a noninvasive BCI doesn't stand up to using implanted devices. Despite this, BCI researchers have forged ahead, their eye on the prize of a less- or non-invasive technology that could help patients everywhere on a daily basis.

Bin He, Trustee Professor and Department Head of Biomedical Engineering at Carnegie Mellon University, is achieving that goal, one key discovery at a time.

"There have been major advances in mind controlled robotic devices using brain implants. It's excellent science," says He. "But noninvasive is the ultimate goal. Advances in neural decoding and the practical utility of noninvasive robotic arm control will have major implications on the eventual development of noninvasive neurorobotics."

Using novel sensing and machine learning techniques, He and his lab have been able to access signals deep within the brain, achieving a high resolution of control over a robotic arm. With noninvasive neuroimaging and a novel continuous pursuit paradigm, He is overcoming the noisy EEG signals, leading to significantly improved EEG-based neural decoding and facilitating real-time continuous 2D robotic device control.

Using a noninvasive BCI to control a robotic arm that's tracking a cursor on a computer screen, for the first time ever, He has shown in human subjects that a robotic arm can now follow the cursor continuously. Whereas robotic arms controlled by humans noninvasively had previously followed a moving cursor in jerky, discrete motions -- as though the robotic arm was trying to "catch up" to the brain's commands -- now, the arm follows the cursor in a smooth, continuous path.

In a paper published in Science Robotics, the team established a new framework that addresses and improves upon the "brain" and "computer" components of BCI by increasing user engagement and training, as well as spatial resolution of noninvasive neural data through EEG source imaging.

The paper, "Noninvasive neuroimaging enhances continuous neural tracking for robotic device control," shows that the team's unique approach to solving this problem not only enhanced BCI learning by nearly 60% for traditional center-out tasks, but also enhanced continuous tracking of a computer cursor by over 500%.

The technology also has applications that could help a variety of people, by offering safe, noninvasive "mind control" of devices that can allow people to interact with and control their environments. The technology has, to date, been tested in 68 able-bodied human subjects (up to 10 sessions for each subject), including virtual device control and controlling of a robotic arm for continuous pursuit. The technology is directly applicable to patients, and the team plans to conduct clinical trials in the near future.

"Despite technical challenges using noninvasive signals, we are fully committed to bringing this safe and economic technology to people who can benefit from it," says He. "This work represents an important step in noninvasive brain-computer interfaces, a technology which someday may become a pervasive assistive technology aiding everyone, like smartphones."

This work was supported in part by the National Center for Complementary and Integrative Health, National Institute of Neurological Disorders and Stroke, National Institute of Biomedical Imaging and Bioengineering, and National Institute of Mental Health.

Story Source:

Materials provided by College of Engineering, Carnegie Mellon University. Original written by Emily Durham. Note: Content may be edited for style and length.

www.sciencedaily.com/releases/2019/06/190619142542.htm

Post by swamprat on Jul 19, 2019 16:04:31 GMT

Cyborg connection?

Elon Musk’s Neuralink unveils device to connect your brain to a smartphone.

Stephen Johnson

17 July, 2019

• Neuralink seeks to build a brain-machine interface that would connect human brains with computers.

• No tests have been performed in humans, but the company hopes to obtain FDA approval and begin human trials in 2020.

• Musk said the technology essentially provides humans the option of "merging with AI."

Elon Musk wants to create a brain-machine interface that helps humans "achieve a kind of symbiosis with artificial intelligence."

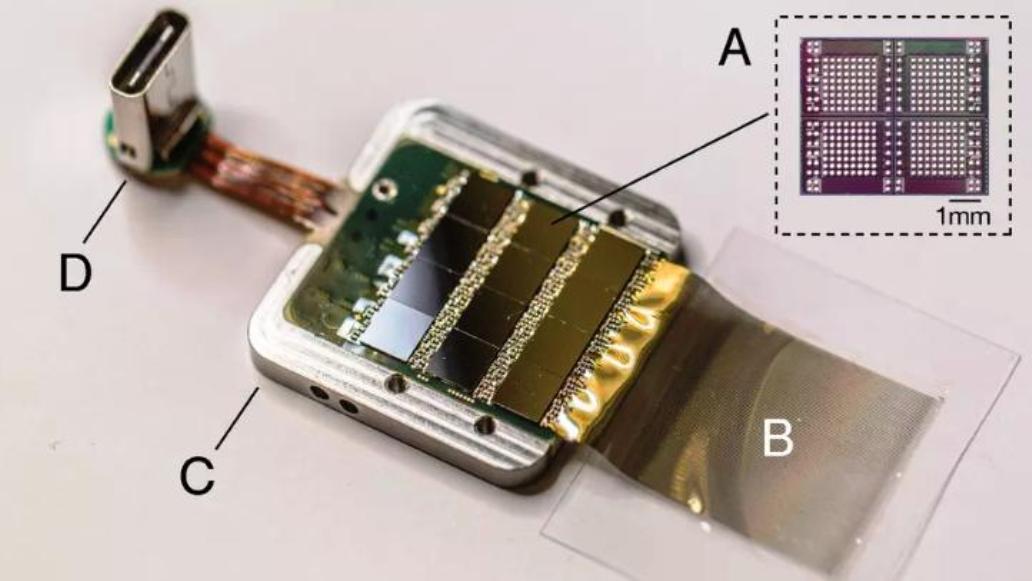

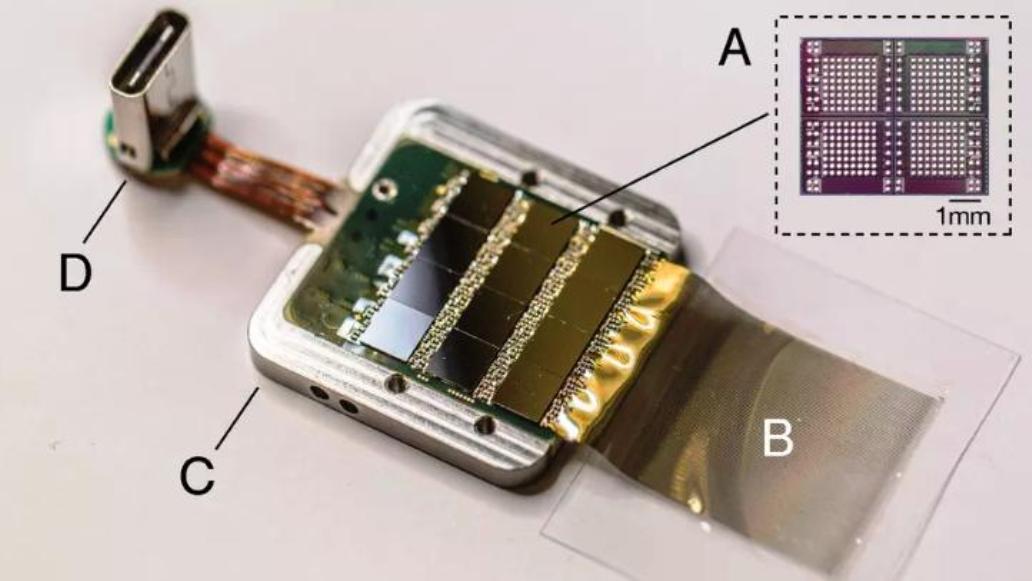

Neuralink, Musk's secretive company that's developing a brain-machine interface, gave a presentation Tuesday that outlined its first steps toward building this technology, which it's been working on for the past two years. The main reveal? Flexible "threads" that record neuron activity, and a machine that inserts these threads into the brain.

The goal is to build an interface that enables someone's brain to control a smartphone or computer, and to make this process as safe and routine as Lasik surgery. Currently, Neuralink has only experimented on animals. In these experiments, the company used a surgical robot to embed into a rat brain a tiny probe with about 3,100 electrodes on some 100 flexible wires or "threads" — each of which is significantly smaller than a human hair.

Watch Musk's video: www.youtube.com/watch?time_continue=4&v=lA77zsJ31nA

This device can record the activity of neurons, which could help scientists learn more about the functions of the brain, specifically in the realm of disease and degenerative disorders. The device was also designed to stimulate brain cells, though a white paper released by the company said it has not yet done so.

"There's an incredible amount we can do to solve brain disorders, damage, and this will occur quite slowly," Musk said in the presentation Tuesday. "This will be a slow process where we'll gradually increase the issues that we solve until ultimately we can do a full brain-machine interface."

One of the most surprising revelations came when Musk said this device has been tested on at least one monkey, which was able to control a computer with its brain. (Musk didn't provide further details.) Neuralink's experiments involve embedding a probe into the animal's brain through invasive surgery with a sewing machine-like robot that drills holes into the skull. Once the probe is embedded, the company connects to it through USB.

Eventually, Neuralink hopes to use laser beams to embed the device, which would use a wireless interface, "so you have no wires poking out of your head," Musk said. "That's very important."

This wireless product — called the N1 sensor — would consist of four sensors implanted in the brain: three in motor areas and one in a somatosensory area, an area of the brain responsible for sensations inside of or on the body's surface. In its early stages, the N1 sensor would enable users to control smartphones with their brain, sort of like "learning to touch type [to] play the piano," Musk said.

Neuralink's device isn't the first example of a brain-machine interface, but the company claims its technology is "state of the art," mainly because it uses smaller and more flexible "threads" for neural recording, instead of rigid electrodes made from metal or semiconductors. The company suggests its approach would be safer and cause less inflammation in the brain.

"It has tremendous potential, and we hope to have this in a human patient by the end of next year," Musk said.

But before that can happen, Neuralink must first obtain FDA approval by establishing that its technology works safely and effectively in animals. It's also worth noting that, like some of Musk's other goals, Neuralink described its 2020 human-trials timeline as "aspirational."

Musk — who once said A.I. is humanity's "biggest existential threat" — suggested that it makes sense for humans to work toward merging with technology.

"Even in a benign AI scenario, we will be left behind," Musk said Tuesday. "With a high-bandwidth brain-machine interface, we can go along for the ride. We can effectively have the option of merging with AI."

bigthink.com/technology-innovation/neuralink-musk-presentation?rebelltitem=2#rebelltitem2

Post by swamprat on Jul 24, 2019 15:14:00 GMT

Vacuum solutions: it’s good to talk

24 Jul 2019 | Sponsored by Kurt J. Lesker Company

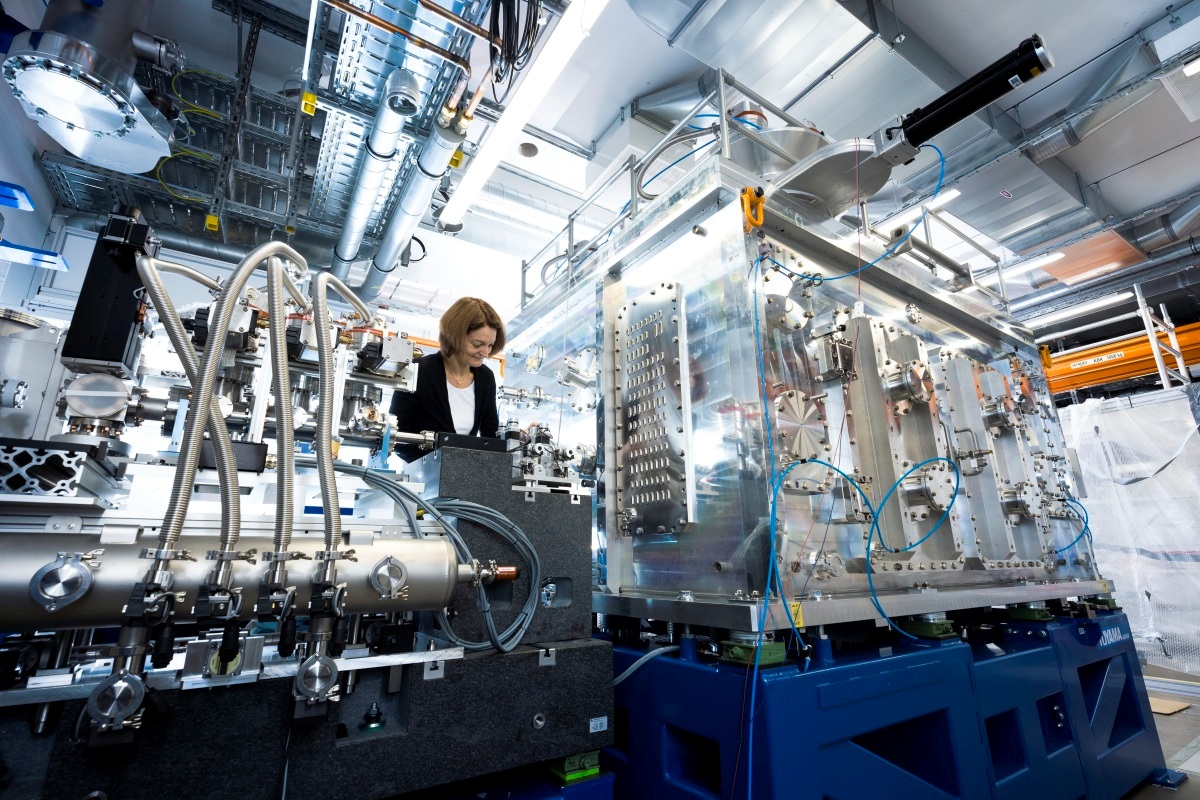

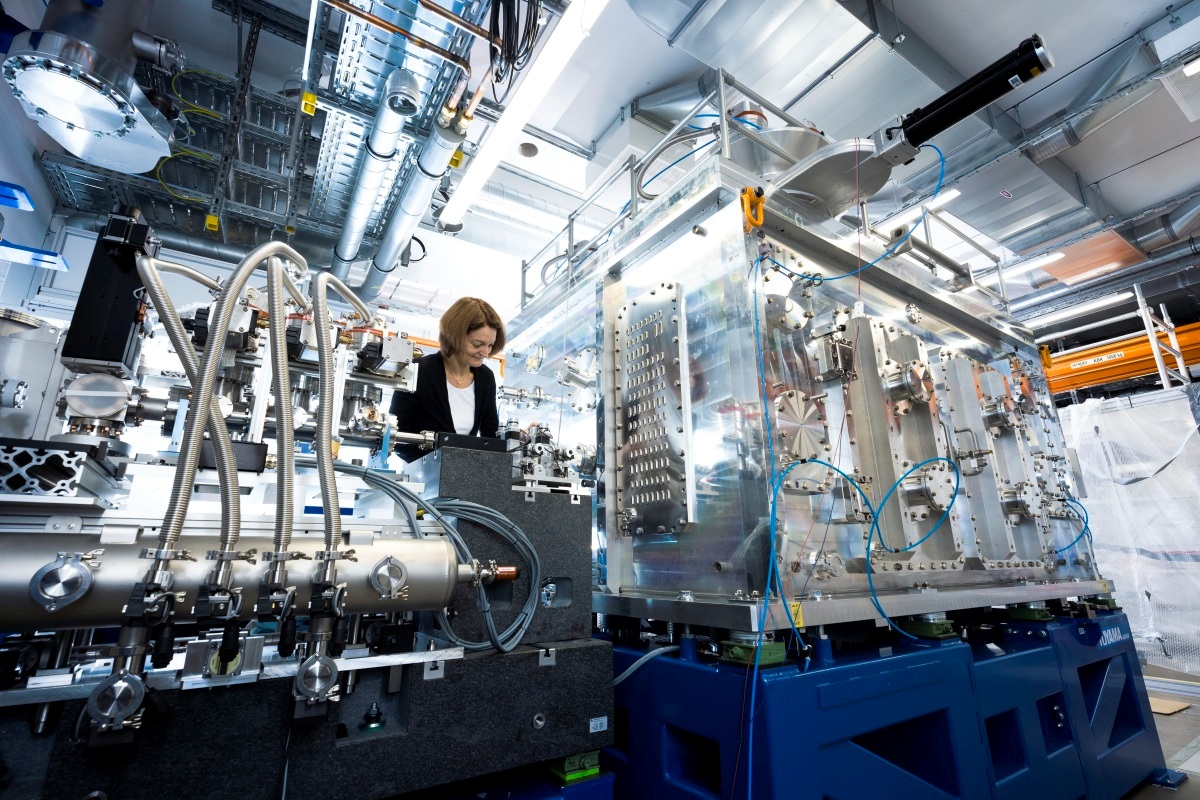

Under pressure: the HED experimental station will be used to study matter under extreme conditions, including new extreme-pressure phases, solid-density plasmas and phase transitions of complex solids in high magnetic fields. (Courtesy: European XFEL/Jan Hosan)

Like many big-science research facilities, the European X-ray Free Electron Laser (European XFEL) has the numbers to impress. The €1.2 bn facility, which is located in Hamburg, Germany, uses superconducting linear accelerator technology to generate 27,000 X-ray flashes per second, with a pulse duration of less than 100 fs and a brilliance that’s orders of magnitude greater than that of any conventional X-ray source.

That unique radiation is put to work in the European XFEL’s underground experimental hall, where six scientific instruments enable international teams of researchers and industrial users to carry out a diverse programme of basic and applied materials research – from mapping the atomic details of cells, viruses and biomolecules to time-resolved investigations of chemical reactions and structural imaging of nanoelectronic materials.

Underpinning that collective endeavour and spanning the 3.4 km long facility (XFEL accelerator, X-ray beamlines and the experimental hall) are all manner of enabling vacuum technologies, including chambers and end-stations, CF flange systems, feedthroughs, sample manipulators, valves, pumps and a range of associated hardware and instrumentation.

Building the relationship

The European XFEL’s High-Energy Density (HED) instrument is a case in point. Here the XFEL’s ultrashort X-ray laser pulses enable fundamental studies of matter at extremes of temperature and pressure – simulating conditions in the interiors of large planets – and at extreme electric or magnetic field strengths.

“Scientists will use the HED instrument to investigate what happens to a material when it’s compressed to very high density and changes state from a solid to a plasma,” says Ian Thorpe, instrument engineer for the HED programme.

Back in May, Thorpe and his colleagues initiated a series of in-house experiments with the HED instrument – effectively a user-assisted commissioning programme to ensure that the set-up is fit for purpose ahead of full go-live later in the summer. “The first of these experiments successfully characterized the focus,” explains Thorpe. “This is critical because we’re working on very small samples and you get the highest detail and pressure if you focus the X-ray and optical laser beams into a very tight spot.”

In terms of its vacuum specifications, the HED instrument requires a mix of ultrahigh-vacuum and high-vacuum technologies for the X-ray optics/diagnostics enclosure and sample chamber. Many standard catalogue parts are available via the European XFEL’s online ordering system, with Kurt J. Lesker Company (KJLC) among the registered suppliers approved by the facility’s procurement department.

However, it’s KJLC’s capabilities in the manufacture and supply of custom vacuum parts, subsystems and chambers that set the working relationship apart. None of the European XFEL’s demands are straightforward, and the delivery of custom vacuum orders relies on a robust feedback loop between manufacturer and customer.

In this way, product specialists and engineers at KJLC review the customer’s designs to fully understand the European XFEL’s technical requirements and scientific objectives. The design review, tighter tolerances, cleaning, vacuum test and bake-out are all part of that collaboration with KJLC.

Connected customers

Luis Lopez is a systems integration engineer on another European XFEL experiment, the Single Particles, Clusters and Biomolecules and Serial Femtosecond Crystallography (SPB/SFX) instrument. SPB/SFX is primarily focused on 3D diffractive imaging and structural dynamics (on timescales of milliseconds to femtoseconds) of biological samples such as macromolecules, viruses, organelles and cells.

Although the scientific and vacuum system requirements for SPB/SFX differ from those of the HED experiment, the SPB/SFX experimental team clearly values the same close working relationship with the KJLC manufacturing division. “We have a direct connection with the product specialists and engineers,” says Lopez. “On custom-made parts, that interaction is welcomed, with KJLC staff often coming up with alternative options, improvements and work-arounds to our original designs.”

physicsworld.com/a/vacuum-solutions-its-good-to-talk/

Post by gus on Jul 25, 2019 23:04:05 GMT

Just in.

“The structure and composition of these materials are not from any known existing military or commercial application,” says Steve Justice, current COO of To The Stars Academy and former head of Advanced Systems at Lockheed Martin's “Skunk Works.” “They’ve been collected from sources with varying levels of chain-of-custody documentation, so we are focusing on verifiable facts and working to develop independent scientific proof of the materials' properties and attributes. In some cases, the manufacturing technology required to fabricate the material is only now becoming available, but the material has been in documented possession since the mid-1990s. We currently have multiple material samples being analyzed by contracted laboratories and have plans to extend the scope of this study.”

TTSA will also seek to engage the potential partners who have expressed interest in helping accelerate ADAM research and development.

“If the claims associated with these assets can be validated and substantiated, then we can initiate work to transition them from being a technology to commercial and military capabilities,” adds Justice. “As noted in our October 2017 TTSA kickoff webcast, technologies that would allow us to engineer the spacetime metric would bring capabilities that would fundamentally alter civilization, with revolutionary changes to transportation, communication and computation.”

Learn more at dpo.tothestarsacademy.com

Read our offering circular here: bit.ly/2Sdy0cV

to-the-stars-web-assets.s3.amazonaws.com/files/Reg%202%20Images/67308078_2511169395572051_7461262150623821824_o.jpg

Post by swamprat on Jul 30, 2019 22:50:41 GMT

Ion Propulsion? This Sci-Fi Tech is Now a Reality

Boeing leads $3M round to boost Accion Systems’ electric space propulsion system

By Alan Boyle

This Boston-based startup company is specializing in some next-level futuristic space technology.

Accion Systems — whose stated mission is to “accelerate the exploration of space” — has developed a tiny (think the size of a postage stamp) new thruster capable of moving satellites around in space.

The stamp-sized thrusters use a non-toxic, ionic liquid propellant. If that technology sounds familiar, it may be because ion propulsion has been popularized in science fiction books, TV shows and movies, including the Star Trek series.

To help scale production of Accion’s new thruster and continue its innovative development, Boeing recently invested in the company through Boeing HorizonX Ventures – a division that seeks to assist the most promising and innovative aerospace startups.

Coming on the heels of its acquisition of Millennium Space Systems and investment in BridgeSat Inc., Boeing is continuing its commitment to building the most advanced satellites in the world.

An artist’s conception shows Accion Systems’ electrospray thruster chips (in gold) arranged in a propulsion array on a satellite. (Accion Systems Illustration / Zina Deretsky)

Boeing’s venture capital fund is leading a $3 million investment round for Accion Systems, a Boston-based startup whose electric propulsion system for satellites could get its next in-space test as early as next month.

Joining Boeing HorizonX Ventures in the Series B round is GettyLab, a Bay Area venture fund focusing on innovations in science and technology.

Accion’s propulsion system is certainly innovative, and in line with the increased emphasis at Boeing and elsewhere on electric propulsion for space applications.

The Tiled Ionic Liquid Electrospray system, also known as TILE, makes use of a non-toxic, liquid propellant that’s pushed out, ion by ion, from arrays of thrusters the size of postage stamps. Ion drives are the stuff of science-fiction (as in “Star Trek”) as well as real-life space missions (such as NASA’s Dawn mission to Ceres). Accion’s TILE system is designed to be smaller, lighter and more cost-effective than traditional ion engines.

“Accion‘s scalable technology can help bring game-changing capabilities to satellites, space vehicles and customers,” Brian Schettler, managing director of Boeing HorizonX Ventures, said today in a news release. “Investing in startups with next-generation concepts accelerates satellite innovation, unlocking new possibilities and economics in Earth orbit and deep space.”

Boeing has been building satellites with electric propulsion for several years, but Accion’s TILE system should extend that capability to next-generation small satellites.

“Our TILE product family gives satellites greater capabilities, and at the size of a postage stamp, it fundamentally rewrites the relationship between mass and propulsion,” said Natalya Bailey, CEO and co-founder of the four-year-old MIT spinout. “Boeing’s aerospace leadership will help us deliver safer, higher performance next-generation propulsion systems to market for satellite and deep-space exploration applications.”

Accion has received annual contracts from the U.S. Department of Defense for the past three years, and the TILE system has already been tested in space twice. Two TILE-equipped, student-built nanosatellites belonging to California’s Irvine CubeSat STEM Program are due to be launched next month.

The Irvine 01 satellite is set to be sent into orbit by a Rocket Lab Electron rocket launching from New Zealand as part of a mission nicknamed “It’s Business Time.” Irvine 02 is one of the payloads scheduled for liftoff from California’s Vandenberg Air Force Base on a SpaceX Falcon 9 rocket, as part of Seattle-based Spaceflight’s dedicated rideshare mission.

The Irvine CubeSat STEM Program brings together more than 100 students from six high schools in California’s Tustin and Irvine School Districts. “Supporting efforts and inspiring students to get excited about STEM and space technology is an exercise in ‘paying it forward’ for all of us,” Bailey said when the project was announced in June.

Boeing HorizonX is the aerospace company’s channel for investing in advanced technologies with potential aerospace applications, ranging from 3-D printing to artificial intelligence.

www.geekwire.com/2018/boeing-leads-3m-round-boost-accion-systems-electric-space-propulsion-system/

Post by swamprat on Sept 17, 2019 15:14:34 GMT

That light bulb up there is a SUN! Albeit a weak one.....

Welcome indoors, solar cells

Wide-gap non-fullerene acceptor enabling high-performance organic photovoltaic cells for indoor applications

Date: September 16, 2019

Source: Linköping University

Summary:

Scientists have developed organic solar cells optimized to convert ambient indoor light to electricity. The power they produce is low, but is probably enough to feed the millions of products that the internet of things will bring online.

As the internet of things expands, it is expected that we will need to have millions of products online, both in public spaces and in homes. Many of these will be the multitude of sensors to detect and measure moisture, particle concentrations, temperature and other parameters. For this reason, the demand for small and cheap sources of renewable energy is increasing rapidly, in order to reduce the need for frequent and expensive battery replacements.

This is where organic solar cells come in. Not only are they flexible, cheap to manufacture and suitable for manufacture as large surfaces in a printing press, they have one further advantage: the light-absorbing layer consists of a mixture of donor and acceptor materials, which gives considerable flexibility in tuning the solar cells such that they are optimised for different spectra -- for light of different wavelengths.

Researchers in Beijing, China, led by Jianhui Hou, and Linköping, Sweden, led by Feng Gao, have now together developed a new combination of donor and acceptor materials, with a carefully determined composition, to be used as the active layer in an organic solar cell. The combination absorbs exactly the wavelengths of light that surround us in our living rooms, at the library and in the supermarket.

The researchers describe two variants of an organic solar cell in an article in Nature Energy, where one variant has an area of 1 cm² and the other 4 cm². The smaller solar cell was exposed to ambient light at an intensity of 1000 lux, and the researchers observed that as much as 26.1% of the energy of the light was converted to electricity. The organic solar cell delivered a high voltage of above 1 V for more than 1000 hours in ambient light that varied between 200 and 1000 lux. The larger solar cell still maintained an energy efficiency of 23%.

"This work indicates great promise for organic solar cells to be widely used in our daily life for powering the internet of things," says Feng Gao, senior lecturer in the Division of Biomolecular and Organic Electronics at Linköping University.

"We are confident that the efficiency of organic solar cells will be further improved for ambient light applications in coming years, because there is still a large room for optimization of the materials used in this work," Jianhui Hou, professor at the Institute of Chemistry, Chinese Academy of Sciences, underlines.

The result is a further advance in research within the field of organic solar cells. In the summer of 2018, for example, the scientists, together with colleagues from a number of other universities, published rules for the construction of efficient organic solar cells (see the link given below). The article brought together 25 researchers from seven universities, and was published in Nature Materials. The research was led by Feng Gao. These rules have proven to be useful along the complete pathway to efficient solar cells for indoor use.

Spin off company

The Biomolecular and Organic Electronics research group at Linköping University, under the leadership of Olle Inganäs (now professor emeritus), has been for many years a world-leader in the field of organic solar cells. A few years ago, Olle Inganäs and his colleague Jonas Bergqvist, who is co-author of the articles in Nature Materials and Nature Energy, founded, and are now co-owners of a company, which focusses on commercialising solar cells for indoor use.

Story Source:

Materials provided by Linköping University. Note: Content may be edited for style and length.

Journal Reference:

1. Yong Cui, Yuming Wang, Jonas Bergqvist, Huifeng Yao, Ye Xu, Bowei Gao, Chenyi Yang, Shaoqing Zhang, Olle Inganäs, Feng Gao, Jianhui Hou. Wide-gap non-fullerene acceptor enabling high-performance organic photovoltaic cells for indoor applications. Nature Energy, 2019; 4 (9): 768 DOI: 10.1038/s41560-019-0448-5

Post by swamprat on Oct 5, 2019 19:29:16 GMT

Over the last few years, we've all seen comments about a material called "graphene". It is obviously an impressive material to people of science. But just what is it, exactly?

"Graphene is the future. Plain and simple.

It's 100 times stronger than steel, thinner than a sheet of paper, and more conductive than copper.

And that's not all...

Researchers the world over are using it for critical advances in a variety of industries. Graphene makes:

• Solar – 50x-100x more efficient

• Semiconductors – 50x-100x faster

• Aircraft – 70% lighter

We're talking batteries that charge 10x faster and store 10x more power...

Phones and computer displays that bend and fold...

And even the potential to make people and things completely invisible.

Indeed, the Huffington Post notes graphene will “change the world.”

It's so vital to our future that it's been named a "supply critical mineral" and a "strategic mineral" by the United States and the European Union."

And.....

Graphene (/ˈɡræfiːn/) is an ALLOTROPE of carbon in the form of a single layer of atoms in a two-dimensional hexagonal lattice in which one atom forms each vertex. It is the basic structural element of other allotropes, including graphite, charcoal, carbon nanotubes and fullerenes. It can also be considered as an indefinitely large aromatic molecule, the ultimate case of the family of flat polycyclic aromatic hydrocarbons.

ALLOTROPE: "The term allotrope refers to one or more forms of a chemical element that occur in the same physical state. The different forms arise from the different ways atoms may be bonded together. The concept of allotropes was proposed by Swedish scientist Jöns Jakob Berzelius in 1841. The ability for elements to exist in this way is called allotropism.

Allotropes may display very different chemical and physical properties. For example, graphite and diamond are both allotropes of carbon that occur in the solid state. Graphite is soft, while diamond is extremely hard. Allotropes of phosphorus display different colors, such as red, yellow, and white. Elements may change allotropes in response to changes in pressure, temperature, and exposure to light."

Graphene has a special set of properties which set it apart from other allotropes of carbon. In proportion to its thickness, it is about 100 times stronger than the strongest steel. Yet its density is dramatically lower than that of any steel, with a surfacic mass of 0.763 mg per square meter. It conducts heat and electricity very efficiently and is nearly transparent. Graphene also shows a large and nonlinear diamagnetism, even greater than graphite, and can be levitated by Nd-Fe-B magnets. Researchers have identified the bipolar transistor effect, ballistic transport of charges and large quantum oscillations in the material.
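
For anyone curious where a figure like 0.763 mg per square meter comes from, here is a rough back-of-the-envelope check in Python. It is only a sketch: the carbon-carbon bond length of 0.142 nm and the function name are my own assumptions and are not taken from the quoted text. The idea is that the honeycomb lattice has two carbon atoms per hexagonal unit cell, so the mass per area is just the mass of two atoms divided by the cell area.

```python
import math

# Assumed inputs (not from the quoted article):
CC_BOND_M = 0.142e-9          # approximate carbon-carbon bond length in graphene, metres
CARBON_MASS_U = 12.011        # standard atomic weight of carbon, atomic mass units
U_KG = 1.66053906660e-27      # one atomic mass unit in kilograms
ATOMS_PER_CELL = 2            # a honeycomb unit cell contains two carbon atoms


def graphene_areal_density_mg_per_m2(bond_length_m: float = CC_BOND_M) -> float:
    """Estimate the mass per unit area of a single graphene sheet in mg/m^2."""
    # Area of the hexagonal unit cell with side equal to the bond length
    cell_area_m2 = (3 * math.sqrt(3) / 2) * bond_length_m ** 2
    # Mass of the two carbon atoms belonging to that cell
    cell_mass_kg = ATOMS_PER_CELL * CARBON_MASS_U * U_KG
    # Convert kg/m^2 to mg/m^2
    return cell_mass_kg / cell_area_m2 * 1e6


if __name__ == "__main__":
    print(f"Estimated areal density: {graphene_areal_density_mg_per_m2():.3f} mg/m^2")
```

With these inputs the estimate lands at roughly 0.76 mg per square meter, close to the quoted value. The small difference depends on the exact bond length you plug in.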

Scientists have theorized about graphene for decades. It has likely been unknowingly produced in small quantities for centuries, through the use of pencils and other similar applications of graphite. It was originally observed in electron microscopes in 1962, but only studied while supported on metal surfaces. The material was later rediscovered, isolated and characterized in 2004 by Andre Geim and Konstantin Novoselov at the University of Manchester. Research was informed by existing theoretical descriptions of its composition, structure and properties. High-quality graphene proved to be surprisingly easy to isolate, making more research possible. This work resulted in the two winning the Nobel Prize in Physics in 2010 "for groundbreaking experiments regarding the two-dimensional material graphene."

The global market for graphene is reported to have reached $9 million by 2012, with most of the demand from research and development in semiconductor, electronics, battery energy and composites. That demand is anticipated to grow by leaps and bounds as more applications are refined. SOURCE: Wikipedia

Graphene turns 15 on track to deliver on its promises

Date: October 4, 2019

Source: Graphene Flagship

Summary:

Scientists analyze the current graphene landscape and market forecast for graphene over the following decade.

Graphene is light, flexible, conductive, and one of the strongest materials in the world. And it is right on track to deliver on its promises -- the Graphene Flagship is confident many applications will be unveiled in the next decade. In a special Nature Nanotechnology issue, celebrating 15 years since the Nobel Prize-winning "ground-breaking experiments on graphene," the Graphene Flagship analysed the current graphene landscape and market forecast for graphene over the following decade.

In a world dominated by the immediacy of social media and digital technologies, it is hard to take a step back and think about how long materials take to develop. The silicon transistor, at the heart of all our beloved gadgets, was engineered in 1958. However, scientists had known of silicon for over 120 years -- it was discovered in 1824. Although expecting broad market penetration for graphene today would not be realistic, the truth is that one can already find graphene-enabled products on the market.

A number of these commercial applications have been enabled by the Graphene Flagship, a project funded by the European Commission that kicked off in 2013. Bringing together nearly 150 partners from 23 countries, it created the perfect breeding ground for innovation, which could not emerge without an intricate web of collaborations between academics, researchers, and industries. The Graphene Flagship also acted as inspiration for many programmes on graphene and related layered materials in many other countries.

The Graphene Flagship expects short-term applications in the materials sector, with graphene-enabled inks, composites, and coatings, for applications ranging from food packaging to textiles and sports goods. In the mid-term, graphene could be crucial for the energy sector, and market analyses agree on a high potential for graphene-enabled batteries and supercapacitors. With the first graphene-enabled solar farm to be installed in Crete next year, the Graphene Flagship will showcase how graphene can enable more sustainable energy generation, in line with Europe's commitment to renewable energies.

A host of applications for graphene are expected to hit the market 10 to 15 years from now. These are related to (opto)electronics, where graphene can deliver performance orders of magnitude higher than current technologies. The developments in this area could trigger the next generation of (opto)electronic devices, bringing 'more-than-Moore' devices to reality.

To secure its most valuable strength -- bridging the gap between basic and applied research -- the Graphene Flagship has also announced the creation of the first graphene foundry. With a budget of almost €20 million over four years, this experimental pilot line will pave the way towards commercially competitive graphene products, such as transceivers, photodetectors, and sensors. The Graphene Flagship foundry will also develop a process design kit: a set of 'instructions' to support product tape-out and guarantee that the finalised designs are high-quality and consistent. The foundry will be accessible by academia and industry stakeholders worldwide.

Kari Hjelt, Graphene Flagship Head of Innovation, stated: "We are now seeing the first wave of graphene-enabled products on the market. The commercialisation activities of graphene are moving from materials development towards components and system level integration. In the future we will see a growing number of high-value add products for various application domains."

Thomas Reiss, Graphene Flagship Work Package Leader for Industrialisation, adds: "Key factors facilitating the further commercialisation of graphene comprise establishing innovation ecosystems and providing holistic innovation support. This includes elaborating innovation roadmaps and creating trust and confidence in graphene among industry by trusted validation and standardization services."

Andrea C. Ferrari, Graphene Flagship Science and Technology Officer and Chair of its Management Panel, concludes: "Graphene and related materials are progressing towards commercialization at the expected pace. The Graphene Flagship is not about hype, but about concrete and tangible results and progress. The Flagship Foundry will strengthen the EU position as world leader and pioneer in graphene technology and facilitate incorporation of graphene devices in various industries."

Story Source:

Materials provided by Graphene Flagship. Note: Content may be edited for style and length.

Journal Reference:

1. T. Reiss, K. Hjelt, A. C. Ferrari. Graphene is on track to deliver on its promises. Nature Nanotechnology, 2019; 14 (10): 907 DOI: 10.1038/s41565-019-0557-0
