
Post by swamprat on Apr 15, 2018 15:42:04 GMT

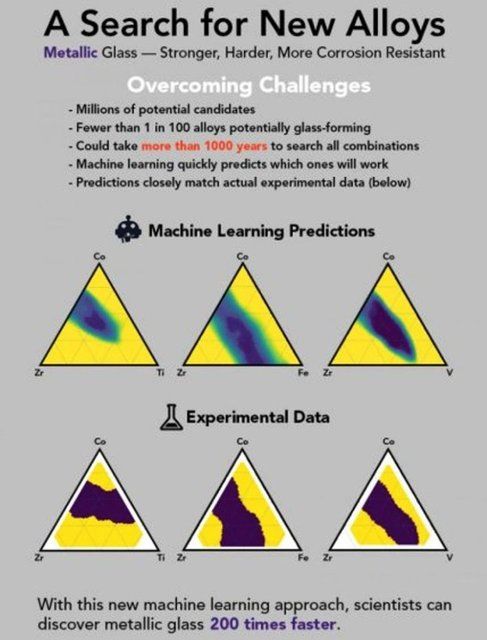

Someday, you may hear, "We're gonna replace the Brooklyn Bridge; and we're gonna make it out of GLASS!"

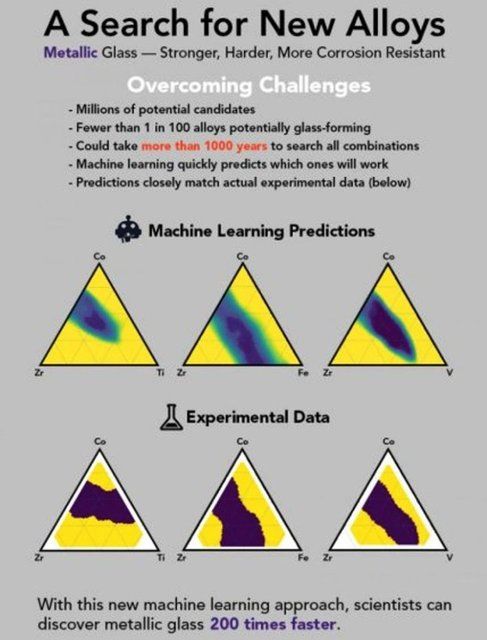

Artificial intelligence accelerates discovery of metallic glass

Machine learning algorithms pinpoint new materials 200 times faster than previously possible

Date: April 13, 2018

Source: Northwestern University

Summary: Combining artificial intelligence with experimentation sped up the discovery of metallic glass by 200 times. The new material's glassy nature makes it stronger, lighter and more corrosion-resistant than today's best steel.

With a new artificial intelligence approach, scientists discovered metallic glass 200 times faster than with an Edisonian approach. Credit: SLAC National Accelerator Laboratory

If you combine two or three metals together, you will get an alloy that usually looks and acts like a metal, with its atoms arranged in rigid geometric patterns.

But once in a while, under just the right conditions, you get something entirely new: a futuristic alloy called metallic glass. The amorphous material's atoms are arranged every which way, much like the atoms of the glass in a window. Its glassy nature makes it stronger and lighter than today's best steel, and it stands up better to corrosion and wear.

Although metallic glass shows a lot of promise as a protective coating and alternative to steel, only a few thousand of the millions of possible combinations of ingredients have been evaluated over the past 50 years, and only a handful developed to the point that they may become useful.

Now a group led by scientists at the Department of Energy's SLAC National Accelerator Laboratory, the National Institute of Standards and Technology (NIST) and Northwestern University has reported a shortcut for discovering and improving metallic glass -- and, by extension, other elusive materials -- at a fraction of the time and cost.

The research group took advantage of a system at SLAC's Stanford Synchrotron Radiation Lightsource (SSRL) that combines machine learning -- a form of artificial intelligence where computer algorithms glean knowledge from enormous amounts of data -- with experiments that quickly make and screen hundreds of sample materials at a time. This allowed the team to discover three new blends of ingredients that form metallic glass, and to do it 200 times faster than it could be done before.

The study was published today, April 13, in Science Advances.

"It typically takes a decade or two to get a material from discovery to commercial use," said Chris Wolverton, the Jerome B. Cohen Professor of Materials Science and Engineering in Northwestern's McCormick School of Engineering, who is an early pioneer in using computation and AI to predict new materials. "This is a big step in trying to squeeze that time down. You could start out with nothing more than a list of properties you want in a material and, using AI, quickly narrow the huge field of potential materials to a few good candidates."

The ultimate goal, said Wolverton, who led the paper's machine learning work, is to get to the point where a scientist can scan hundreds of sample materials, get almost immediate feedback from machine learning models and have another set of samples ready to test the next day -- or even within the hour.

Over the past half century, scientists have investigated about 6,000 combinations of ingredients that form metallic glass. Added paper co-author Apurva Mehta, a staff scientist at SSRL: "We were able to make and screen 20,000 in a single year."

Just getting started

While other groups have used machine learning to come up with predictions about where different kinds of metallic glass can be found, Mehta said, "The unique thing we have done is to rapidly verify our predictions with experimental measurements and then repeatedly cycle the results back into the next round of machine learning and experiments."

There's plenty of room to make the process even speedier, he added, and eventually automate it to take people out of the loop altogether so scientists can concentrate on other aspects of their work that require human intuition and creativity. "This will have an impact not just on synchrotron users, but on the whole materials science and chemistry community," Mehta said.

The team said the method will be useful in all kinds of experiments, especially in searches for materials like metallic glass and catalysts whose performance is strongly influenced by the way they're manufactured, and those where scientists don't have theories to guide their search. With machine learning, no previous understanding is needed. The algorithms make connections and draw conclusions on their own, which can steer research in unexpected directions.

"One of the more exciting aspects of this is that we can make predictions so quickly and turn experiments around so rapidly that we can afford to investigate materials that don't follow our normal rules of thumb about whether a material will form a glass or not," said paper co-author Jason Hattrick-Simpers, a materials research engineer at NIST. "AI is going to shift the landscape of how materials science is done, and this is the first step."

Experimenting with data

In the metallic glass study, the research team investigated thousands of alloys that each contain three cheap, nontoxic metals.

They started with a trove of materials data dating back more than 50 years, including the results of 6,000 experiments that searched for metallic glass. The team combed through the data with advanced machine learning algorithms developed by Wolverton and Logan Ward, a graduate student in Wolverton's laboratory who served as co-first author of the paper.

Based on what the algorithms learned in this first round, the scientists crafted two sets of sample alloys using two different methods, allowing them to test how manufacturing methods affect whether an alloy morphs into a glass. An SSRL x-ray beam scanned both sets of alloys, then researchers fed the results into a database to generate new machine learning results, which were used to prepare new samples that underwent another round of scanning and machine learning.

By the experiment's third and final round, Mehta said, the group's success rate for finding metallic glass had increased from one out of 300 or 400 samples tested to one out of two or three samples tested. The metallic glass samples they identified represented three different combinations of ingredients, two of which had never been used to make metallic glass before.
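The predict, measure and retrain cycle described above can be sketched as a toy loop. This is only an illustration under loud assumptions, not the team's actual pipeline: the `measure` function is a made-up stand-in for synthesis plus the x-ray scan, the nearest-neighbour `predict` is a deliberately simple substitute for the study's machine learning models, and all names, batch sizes and the glass-forming region are hypothetical.

```python
import random


def make_candidates(step=0.05):
    """Enumerate three-metal compositions (a, b, c) with a + b + c = 1."""
    n = round(1 / step)
    return [(i / n, j / n, (n - i - j) / n)
            for i in range(n + 1) for j in range(n + 1 - i)]


def measure(comp):
    """Hypothetical experiment: a made-up glass-forming region, NOT real data."""
    a, b, _ = comp
    return (a - 0.3) ** 2 + (b - 0.5) ** 2 < 0.02


def predict(comp, known):
    """Toy model: copy the label of the nearest already-measured composition."""
    nearest = min(known, key=lambda k: sum((x - y) ** 2 for x, y in zip(comp, k)))
    return known[nearest]


def run_rounds(rounds=3, batch=20, seed=0):
    """Return the fraction of glass formers found in each round's batch."""
    rng = random.Random(seed)
    pool = make_candidates()
    rng.shuffle(pool)
    # Seed data set, standing in for the historical records.
    known = {c: measure(c) for c in pool[:batch]}
    pool = pool[batch:]
    hit_rates = []
    for _ in range(rounds):
        # Rank untested candidates so predicted glass formers come first.
        pool.sort(key=lambda c: predict(c, known), reverse=True)
        chosen, pool = pool[:batch], pool[batch:]
        results = {c: measure(c) for c in chosen}
        hit_rates.append(sum(results.values()) / batch)
        known.update(results)  # feed the measurements back into the model
    return hit_rates


if __name__ == "__main__":
    print(run_rounds())
```

The point of the sketch is the shape of the loop: each round's measurements are added to the data the next round's predictions are drawn from, which is the feedback step Mehta describes.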

The study was funded by the US Department of Energy (award number FWP-100250), the Center for Hierarchical Materials Design and the National Institute of Standards and Technology (award number 70NANB14H012).

Story Source:

Materials provided by Northwestern University. Note: Content may be edited for style and length.

Journal Reference:

1. Fang Ren, Logan Ward, Travis Williams, Kevin J. Laws, Christopher Wolverton, Jason Hattrick-Simpers, Apurva Mehta. Accelerated discovery of metallic glasses through iteration of machine learning and high-throughput experiments. Science Advances, 2018; 4 (4): eaaq1566 DOI: 10.1126/sciadv.aaq1566


Post by swamprat on May 21, 2018 14:47:37 GMT

Elon Musk: ‘Mark my words — A.I. is far more dangerous than nukes’

Catherine Clifford | May 17, 2018

Tesla and SpaceX boss Elon Musk has doubled down on his dire warnings about the danger of artificial intelligence.

The billionaire tech entrepreneur called AI more dangerous than nuclear warheads and said there needs to be a regulatory body overseeing the development of super intelligence, speaking at the South by Southwest tech conference in Austin, Texas on Sunday.

It is not the first time Musk has made frightening predictions about the potential of artificial intelligence — he has, for example, called AI vastly more dangerous than North Korea — and he has previously called for regulatory oversight.

Some have called his tough talk fear-mongering. Facebook founder Mark Zuckerberg said Musk’s doomsday AI scenarios are unnecessary and “pretty irresponsible.” And Harvard professor Steven Pinker also recently criticized Musk’s tactics.

Musk, however, is resolute, calling those who push against his warnings “fools” at SXSW.

“The biggest issue I see with so-called AI experts is that they think they know more than they do, and they think they are smarter than they actually are,” said Musk. “This tends to plague smart people. They define themselves by their intelligence and they don’t like the idea that a machine could be way smarter than them, so they discount the idea — which is fundamentally flawed.”

Based on his knowledge of machine intelligence and its developments, Musk believes there is reason to be worried.

“I am really quite close, I am very close, to the cutting edge in AI and it scares the hell out of me,” said Musk. “It’s capable of vastly more than almost anyone knows and the rate of improvement is exponential.”

Musk pointed to machine intelligence playing the ancient Chinese strategy game Go to demonstrate rapid growth in AI’s capabilities. For example, the London-based company DeepMind, which was acquired by Google in 2014, developed an artificial intelligence system, AlphaGo Zero, that learned to play Go without any human intervention. It learned simply from randomized play against itself. The Alphabet-owned company announced this development in a paper published in October.

Musk worries AI’s development will outpace our ability to manage it in a safe way.

“So the rate of improvement is really dramatic. We have to figure out some way to ensure that the advent of digital super intelligence is one which is symbiotic with humanity. I think that is the single biggest existential crisis that we face and the most pressing one.”

To do this, Musk recommended the development of artificial intelligence be regulated.

“I am not normally an advocate of regulation and oversight — I think one should generally err on the side of minimizing those things — but this is a case where you have a very serious danger to the public,” said Musk.

“It needs to be a public body that has insight and then oversight to confirm that everyone is developing AI safely. This is extremely important. I think the danger of AI is much greater than the danger of nuclear warheads by a lot and nobody would suggest that we allow anyone to build nuclear warheads if they want. That would be insane,” he said at SXSW.

“And mark my words, AI is far more dangerous than nukes. Far. So why do we have no regulatory oversight? This is insane.”

Musk called for regulatory oversight of artificial intelligence in July too, speaking to the National Governors Association. “AI is a rare case where I think we need to be proactive in regulation than be reactive,” Musk said in July.


In his analysis of the dangers of AI, Musk differentiates between case-specific applications of machine intelligence like self-driving cars and general machine intelligence, which he has described previously as having “an open-ended utility function” and having a “million times more compute power” than case-specific AI.

“I am not really all that worried about the short term stuff. Narrow AI is not a species-level risk. It will result in dislocation, in lost jobs, and better weaponry and that kind of thing, but it is not a fundamental species level risk, whereas digital super intelligence is,” explained Musk.

“So it is really all about laying the groundwork to make sure that if humanity collectively decides that creating digital super intelligence is the right move, then we should do so very very carefully — very very carefully. This is the most important thing that we could possibly do.”

Still, Musk is in the business of artificial intelligence with his venture Neuralink, a company working to create a way to connect the brain with machine intelligence.

Musk hopes “that we are able to achieve a symbiosis” with artificial intelligence: “We do want a close coupling between collective human intelligence and digital intelligence, and Neuralink is trying to help in that regard by trying to create a high bandwidth interface between AI and the human brain,” he said.

edu.proarch.top/?p=283


Post by swamprat on May 24, 2018 21:06:36 GMT

Will AI Ever Become Conscious?

By Mindy Weisberger, Senior Writer | May 24, 2018

When science-fiction worlds introduce robots that look and behave like people, sooner or later those worlds' inhabitants confront the question of robot self-awareness. If a machine is built to truly mimic a human, its "brain" must be complex enough not only to process information as ours does, but also to achieve certain types of abstract thinking that make us human. This includes recognition of our "selves" and our place in the world, a state known as consciousness.

One example of a sci-fi struggle to define AI consciousness is AMC's "Humans" (Tues. 10/9c starting June 5). At this point in the series, human-like machines called Synths have become self-aware; as they band together in communities to live independent lives and define who they are, they must also battle for acceptance and survival against the hostile humans who created and used them.

But what exactly might "consciousness" mean for artificial intelligence (AI) in the real world, and how close is AI to reaching that goal?

Philosophers have described consciousness as having a unique sense of self coupled with an awareness of what's going on around you. And neuroscientists have offered their own perspective on how consciousness might be quantified, through analysis of a person's brain activity as it integrates and interprets sensory data.

However, applying those rules to AI is tricky. In some ways, the processing abilities of AI are not unlike those that take place in human brains. Sophisticated AI systems use a process called deep learning to solve computational tasks quickly, using networks of layered algorithms that communicate with each other to solve more and more complex problems.

It's a strategy very similar to that of our own brains, where information speeds across connections between neurons. In a neural network, deep learning enables AI to teach itself how to identify disease, win a strategy game against the best human player in the world, or write a pop song.

But to accomplish these feats, any neural network still relies on a human programmer setting the tasks and selecting the data for it to learn from. Consciousness for AI would mean that neural networks could make those initial choices themselves, "deviating from the programmers' intentions and doing their own thing," Edith Elkind, a professor of computing science at the University of Oxford in the U.K., told Live Science in an email.

"Machines will become conscious when they start to set their own goals and act according to these goals rather than do what they were programmed to do," Elkind said.

"This is different from autonomy: Even a fully autonomous car would still drive from A to B as told," she added.

Three stages of consciousness

One of the pitfalls for machines becoming self-aware is that consciousness in humans is not well-defined enough, which would make it difficult if not impossible for programmers to replicate such a state in algorithms for AI, researchers reported in a study published in October 2017 in the journal Science.

The scientists defined three levels of human consciousness, based on the computation that happens in the brain. The first, which they labeled "C0," represents calculations that happen without our knowledge, such as during facial recognition, and most AI functions at this level, the scientists wrote in the study.

The second level, "C1," involves a so-called "global" awareness of information — in other words, actively sifting and evaluating quantities of data to make an informed, deliberate choice in response to specific circumstances.

Self-awareness emerges in the third level, "C2," in which individuals recognize and correct mistakes and investigate the unknown, the study authors reported.

"Once we can spell out in computational terms what the differences may be in humans between conscious and unconsciousness, coding that into computers may not be that hard," study co-author Hakwan Lau, a UCLA neuroscientist, previously told Live Science.

To a certain extent, some types of AI can evaluate their actions and correct them responsively — a component of the C2 level of human consciousness. But don't expect to meet self-aware AI anytime soon, Elkind said in the email.

"While we are quite close to having machines that can operate autonomously (self-driving cars, robots that can explore an unknown terrain, etc.), we are very far from having conscious machines," Elkind said.

So, for now, if you want to see "conscious" AI in action, you can watch the Synths vie for their rights in "Humans." The third season debuts June 5 at 10/9c.

www.livescience.com/62656-when-will-ai-be-conscious.html


Post by HAL on May 25, 2018 18:28:39 GMT

"While we are quite close to having machines that can operate autonomously (self-driving cars, robots that can explore an unknown terrain, etc....

Uber has cancelled its self-driving car project in Arizona due to one of its cars killing a pedestrian. But it is going to start the trials again in Pittsburgh PA.

Does this mean the people of Pittsburgh are more expendable than the people of Arizona ?

HAL


Post by GhostofEd on May 26, 2018 0:50:59 GMT

"Does this mean the people of Pittsburgh are more expendable than the people of Arizona ?" (HAL)

No. It probably means someone thinks those who live in Pittsburgh are smarter vs. those who live in Arizona thus less chance of that type of accident happening.


Post by HAL on May 26, 2018 19:59:06 GMT

I thought that the car was supposed to be the smart one. 'Driver-less cars will reduce the accidents caused by human error' etc.

Ring ring.

'Hello, Pittsburgh Mayor here'.

'Police chief here Mr Mayor. I have bad news and good news for you. The bad news is that I have to inform you that an Uber driver-less car has just killed a pedestrian'.

'Oh my God, what's the good news ?'

'She was a tourist from Arizona'.

HAL


Post by swamprat on May 30, 2018 15:27:59 GMT

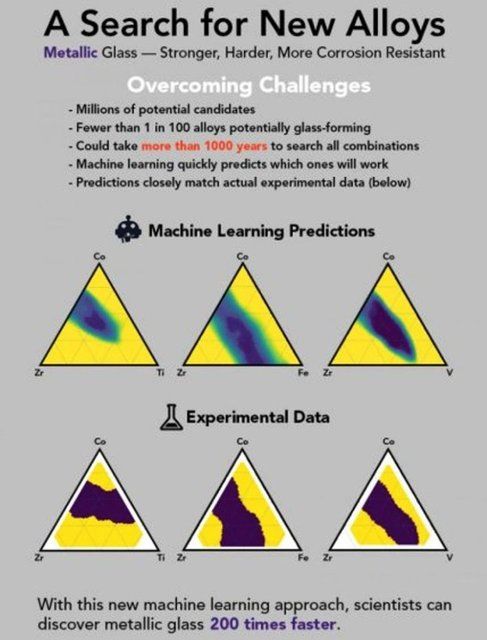

Noninvasive technique to correct vision

Date: May 29, 2018

Source: Columbia University School of Engineering and Applied Science

Summary:

Engineers have developed a noninvasive approach to permanently correct vision that shows great promise in preclinical models. The method uses a femtosecond oscillator for selective and localized alteration of the biochemical and biomechanical properties of corneal tissue. The technique, which changes the tissue's macroscopic geometry, is non-surgical and has fewer side effects and limitations than those seen in refractive surgeries. The study could lead to treatment for myopia, hyperopia, astigmatism, and irregular astigmatism.

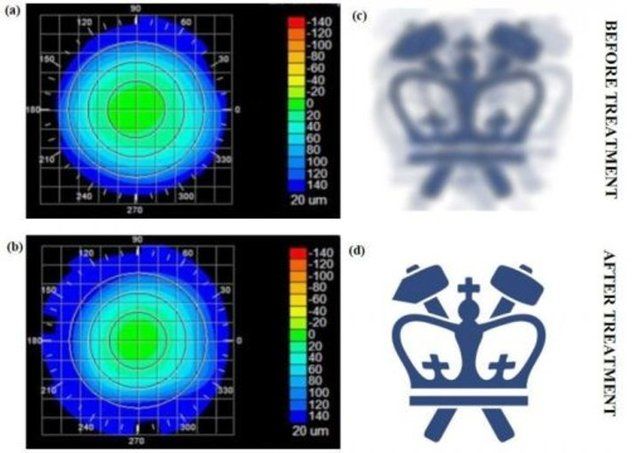

Corneal topography before and after the treatment, paired with virtual vision that simulates effects of induced refractive power change. Credit: Sinisa Vukelic/Columbia Engineering

Nearsightedness, or myopia, is an increasing problem around the world. There are now twice as many people in the US and Europe with this condition as there were 50 years ago. In East Asia, 70 to 90 percent of teenagers and young adults are nearsighted. By some estimates, about 2.5 billion people across the globe may be affected by myopia by 2020.

Eyeglasses and contact lenses are simple solutions; a more permanent one is corneal refractive surgery. But, while vision correction surgery has a relatively high success rate, it is an invasive procedure, subject to post-surgical complications and, in rare cases, permanent vision loss. In addition, laser-assisted vision correction surgeries such as laser in situ keratomileusis (LASIK) and photorefractive keratectomy (PRK) still use ablative technology, which can thin and in some cases weaken the cornea.

Columbia Engineering researcher Sinisa Vukelic has developed a new non-invasive approach to permanently correct vision that shows great promise in preclinical models. His method uses a femtosecond oscillator, an ultrafast laser that delivers pulses of very low energy at high repetition rate, for selective and localized alteration of the biochemical and biomechanical properties of corneal tissue. The technique, which changes the tissue's macroscopic geometry, is non-surgical and has fewer side effects and limitations than those seen in refractive surgeries. For instance, patients with thin corneas, dry eyes, and other abnormalities cannot undergo refractive surgery. The study, which could lead to treatment for myopia, hyperopia, astigmatism, and irregular astigmatism, was published May 14 in Nature Photonics.

"We think our study is the first to use this laser output regimen for noninvasive change of corneal curvature or treatment of other clinical problems," says Vukelic, who is a lecturer in discipline in the department of mechanical engineering. His method uses a femtosecond oscillator to alter biochemical and biomechanical properties of collagenous tissue without causing cellular damage and tissue disruption. The technique allows for enough power to induce a low-density plasma within the set focal volume but does not convey enough energy to cause damage to the tissue within the treatment region.

"We've seen low-density plasma in multi-photon imaging where it's been considered an undesired side-effect," Vukelic says. "We were able to transform this side-effect into a viable treatment for enhancing the mechanical properties of collagenous tissues."

The critical component of Vukelic's approach is that the induction of low-density plasma causes ionization of water molecules within the cornea. This ionization creates a reactive oxygen species (a type of unstable molecule that contains oxygen and that easily reacts with other molecules in a cell), which in turn interacts with the collagen fibrils to form chemical bonds, or crosslinks. The selective introduction of these crosslinks induces changes in the mechanical properties of the treated corneal tissue.

When his technique is applied to corneal tissue, the crosslinking alters the collagen properties in the treated regions, and this ultimately results in changes in the overall macrostructure of the cornea. The treatment ionizes the target molecules within the cornea while avoiding optical breakdown of the corneal tissue. Because the process is photochemical, it does not disrupt tissue and the induced changes remain stable.

"If we carefully tailor these changes, we can adjust the corneal curvature and thus change the refractive power of the eye," says Vukelic. "This is a fundamental departure from the mainstream ultrafast laser treatment that is currently applied in both research and clinical settings and relies on the optical breakdown of the target materials and subsequent cavitation bubble formation."

"Refractive surgery has been around for many years, and although it is a mature technology, the field has been searching for a viable, less invasive alternative for a long time," says Leejee H. Suh, Miranda Wong Tang Associate Professor of Ophthalmology at the Columbia University Medical Center, who was not involved with the study. "Vukelic's next-generation modality shows great promise. This could be a major advance in treating a much larger global population and address the myopia pandemic."

Vukelic's group is currently building a clinical prototype and plans to start clinical trials by the end of the year. He is also looking to develop a way to predict corneal behavior as a function of laser irradiation: how the cornea might deform if a small circle or an ellipse, for example, were treated. If researchers know how the cornea will behave, they will be able to personalize the treatment -- they could scan a patient's cornea and then use Vukelic's algorithm to make patient-specific changes to improve his/her vision.

"What's especially exciting is that our technique is not limited to ocular media -- it can be used on other collagen-rich tissues," Vukelic adds. "We've also been working with Professor Gerard Ateshian's lab to treat early osteoarthritis, and the preliminary results are very, very encouraging. We think our non-invasive approach has the potential to open avenues to treat or repair collagenous tissue without causing tissue damage."

________________________________________

Story Source:

Materials provided by Columbia University School of Engineering and Applied Science. Note: Content may be edited for style and length.

________________________________________

Journal Reference:

1. Chao Wang, Mikhail Fomovsky, Guanxiong Miao, Mariya Zyablitskaya, Sinisa Vukelic. Femtosecond laser crosslinking of the cornea for non-invasive vision correction. Nature Photonics, 2018; DOI: 10.1038/s41566-018-0174-8


Post by swamprat on May 30, 2018 15:31:27 GMT

And... Still a few years away from availability, but on the way:

First 3D-printed human corneas

Date: May 29, 2018

Source: Newcastle University

Summary:

The first human corneas have been 3D printed by scientists at Newcastle University, UK.

It means the technique could be used in the future to ensure an unlimited supply of corneas.

As the outermost layer of the human eye, the cornea has an important role in focusing vision.

Yet there is a significant shortage of corneas available to transplant, with 10 million people worldwide requiring surgery to prevent corneal blindness as a result of diseases such as trachoma, an infectious eye disorder.

In addition, almost 5 million people suffer total blindness due to corneal scarring caused by burns, lacerations, abrasion or disease.

The proof-of-concept research, published today in Experimental Eye Research, reports how stem cells (human corneal stromal cells) from a healthy donor cornea were mixed together with alginate and collagen to create a solution that could be printed, a 'bio-ink'.

Using a simple low-cost 3D bio-printer, the bio-ink was successfully extruded in concentric circles to form the shape of a human cornea. It took less than 10 minutes to print.

The stem cells were then shown to culture -- or grow.

Che Connon, Professor of Tissue Engineering at Newcastle University, who led the work, said: "Many teams across the world have been chasing the ideal bio-ink to make this process feasible.

"Our unique gel -- a combination of alginate and collagen -- keeps the stem cells alive whilst producing a material which is stiff enough to hold its shape but soft enough to be squeezed out the nozzle of a 3D printer.

"This builds upon our previous work in which we kept cells alive for weeks at room temperature within a similar hydrogel. Now we have a ready to use bio-ink containing stem cells allowing users to start printing tissues without having to worry about growing the cells separately."

The scientists, including first author and PhD student Ms Abigail Isaacson from the Institute of Genetic Medicine, Newcastle University, also demonstrated that they could build a cornea to match a patient's unique specifications.

The dimensions of the printed tissue were originally taken from an actual cornea. By scanning a patient's eye, they could use the data to rapidly print a cornea which matched the size and shape.

Professor Connon added: "Our 3D printed corneas will now have to undergo further testing and it will be several years before we could be in the position where we are using them for transplants.

"However, what we have shown is that it is feasible to print corneas using coordinates taken from a patient eye and that this approach has potential to combat the world-wide shortage."

Reference:

3D Bioprinting of a Corneal Stroma Equivalent. Abigail Isaacson, Stephen Swioklo, Che J. Connon. Experimental Eye Research.

Story Source:

Materials provided by Newcastle University. Note: Content may be edited for style and length.

Journal Reference:

1. Isaacson A, Swioklo S, Connon C. 3D bioprinting of a corneal stroma equivalent. Experimental Eye Research, 2018 DOI: 10.1016/j.exer.2018.05.010


Post by swamprat on May 31, 2018 14:21:20 GMT

Time for another headache..... Shut up! I'm THINKING!

The Standard Model theory of particle physics

By EarthSky Voices in HUMAN WORLD | May 31, 2018

“What a dull name for the most accurate scientific theory known to human beings … As a theoretical physicist, I’d prefer ‘The Absolutely Amazing Theory of Almost Everything’.”

How does our world work on a subatomic level? Image via Varsha Y S.

By Glenn Starkman, Case Western Reserve University

The Standard Model. What a dull name for the most accurate scientific theory known to human beings.

More than a quarter of the Nobel Prizes in physics of the last century are direct inputs to or direct results of the Standard Model. Yet its name suggests that if you can afford a few extra dollars a month you should buy the upgrade. As a theoretical physicist, I’d prefer The Absolutely Amazing Theory of Almost Everything. That’s what the Standard Model really is.

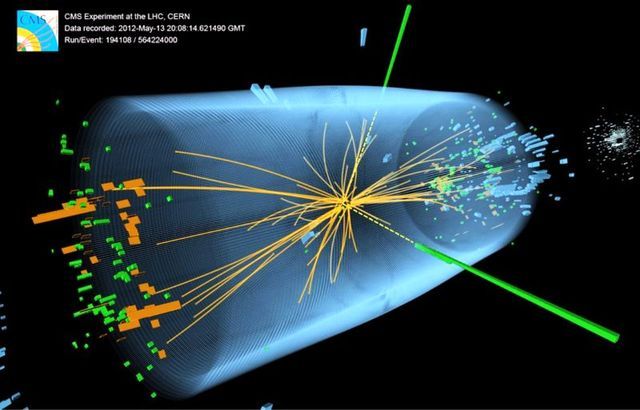

Many recall the excitement among scientists and media over the 2012 discovery of the Higgs boson. But that much-ballyhooed event didn’t come out of the blue – it capped a five-decade undefeated streak for the Standard Model. Every fundamental force but gravity is included in it. Every attempt to overturn it, to demonstrate in the laboratory that it must be substantially reworked – and there have been many over the past 50 years – has failed.

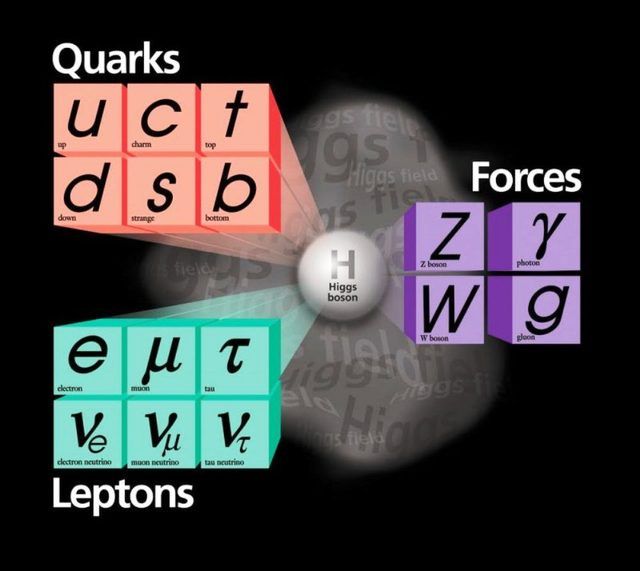

In short, the Standard Model answers this question: What is everything made of, and how does it hold together?

The smallest building blocks

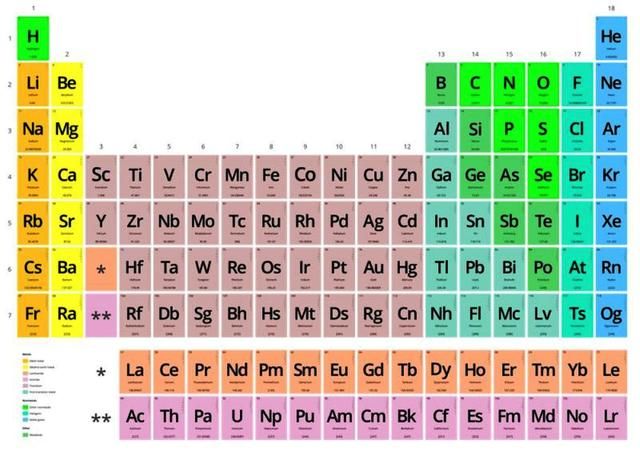

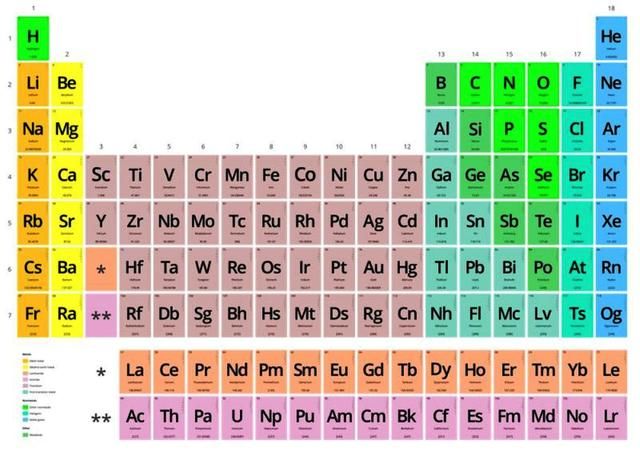

You know, of course, that the world around us is made of molecules, and molecules are made of atoms. Chemist Dmitri Mendeleev figured that out in the 1860s and organized all atoms – that is, the elements – into the periodic table that you probably studied in middle school. But there are 118 different chemical elements. There’s antimony, arsenic, aluminum, selenium … and 114 more.

But these elements can be broken down further. Image via Rubén Vera Koster.

Physicists like things simple. We want to boil things down to their essence, a few basic building blocks. Over a hundred chemical elements is not simple. The ancients believed that everything is made of just five elements – earth, water, fire, air and aether. Five is much simpler than 118. It’s also wrong.

By 1932, scientists knew that all those atoms are made of just three particles – neutrons, protons and electrons. The neutrons and protons are bound together tightly into the nucleus. The electrons, thousands of times lighter, whirl around the nucleus at speeds approaching that of light. Physicists Planck, Bohr, Schroedinger, Heisenberg and friends had invented a new science – quantum mechanics – to explain this motion.

That would have been a satisfying place to stop. Just three particles. Three is even simpler than five. But held together how? The negatively charged electrons and positively charged protons are bound together by electromagnetism. But the protons are all huddled together in the nucleus and their positive charges should be pushing them powerfully apart. The neutral neutrons can’t help.

What binds these protons and neutrons together? “Divine intervention,” a man on a Toronto street corner told me; he had a pamphlet, I could read all about it. But this scenario seemed like a lot of trouble even for a divine being – keeping tabs on every single one of the universe’s 10^80 protons and neutrons and bending them to its will.

Expanding the zoo of particles

Meanwhile, nature cruelly declined to keep its zoo of particles to just three. Really four, because we should count the photon, the particle of light that Einstein described. Four grew to five when Anderson measured electrons with positive charge – positrons – striking the Earth from outer space. At least Dirac had predicted these first anti-matter particles. Five became six when the pion, which Yukawa predicted would hold the nucleus together, was found.

Then came the muon – 200 times heavier than the electron, but otherwise a twin. “Who ordered that?” I. I. Rabi quipped. That sums it up. Number seven. Not only not simple, redundant.

By the 1960s there were hundreds of “fundamental” particles. In place of the well-organized periodic table, there were just long lists of baryons (heavy particles like protons and neutrons), mesons (like Yukawa’s pions) and leptons (light particles like the electron, and the elusive neutrinos) – with no organization and no guiding principles.

Into this breach sidled the Standard Model. It was not an overnight flash of brilliance. No Archimedes leapt out of a bathtub shouting “eureka.” Instead, there was a series of crucial insights by a few key individuals in the mid-1960s that transformed this quagmire into a simple theory, and then five decades of experimental verification and theoretical elaboration.

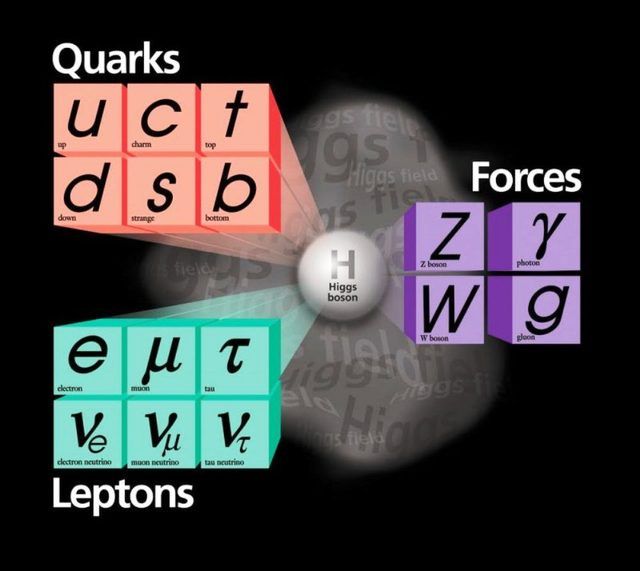

Quarks. They come in six varieties we call flavors. Like ice cream, except not as tasty. Instead of vanilla, chocolate and so on, we have up, down, strange, charm, bottom and top. In 1964, Gell-Mann and Zweig taught us the recipes: Mix and match any three quarks to get a baryon. Protons are two ups and a down quark bound together; neutrons are two downs and an up. Choose one quark and one antiquark to get a meson. A pion is an up or a down quark bound to an anti-up or an anti-down. All the material of our daily lives is made of just up and down quarks and anti-quarks and electrons.
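As a rough check on those recipes, the sketch below adds up quark electric charges. It assumes the standard values of +2/3 for up-type quarks and -1/3 for down-type quarks (in units of the proton charge), which the article itself does not state; the dictionary and function names are made up for illustration.

```python
from fractions import Fraction

# Electric charge of each quark flavor, in units of the proton charge.
# These are the standard textbook values, not something quoted in the article.
QUARK_CHARGE = {
    "up": Fraction(2, 3), "charm": Fraction(2, 3), "top": Fraction(2, 3),
    "down": Fraction(-1, 3), "strange": Fraction(-1, 3), "bottom": Fraction(-1, 3),
}

def total_charge(quarks, antiquarks=()):
    """Sum the charges of a bound state; an antiquark carries the opposite charge."""
    plus = sum((QUARK_CHARGE[q] for q in quarks), Fraction(0))
    minus = sum((QUARK_CHARGE[q] for q in antiquarks), Fraction(0))
    return plus - minus

proton = total_charge(["up", "up", "down"])           # baryon: three quarks
neutron = total_charge(["down", "down", "up"])        # baryon: three quarks
pi_plus = total_charge(["up"], antiquarks=["down"])   # meson: quark + antiquark

print(proton, neutron, pi_plus)  # prints: 1 0 1
```

Two ups and a down come out at charge +1 and two downs and an up at charge 0, which matches the proton and neutron recipes given above.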

The Standard Model of elementary particles provides an ingredients list for everything around us. Image via Fermi National Accelerator Laboratory.

Simple. Well, simple-ish, because keeping those quarks bound is a feat. They are tied to one another so tightly that you never ever find a quark or anti-quark on its own. The theory of that binding, and the particles called gluons (chuckle) that are responsible, is called quantum chromodynamics. It’s a vital piece of the Standard Model, but mathematically difficult, even posing an unsolved problem of basic mathematics. We physicists do our best to calculate with it, but we’re still learning how.

The other aspect of the Standard Model is “A Model of Leptons.” That’s the name of the landmark 1967 paper by Steven Weinberg that pulled together quantum mechanics with the vital pieces of knowledge of how particles interact and organized the two into a single theory. It incorporated the familiar electromagnetism, joined it with what physicists called “the weak force” that causes certain radioactive decays, and explained that they were different aspects of the same force. It incorporated the Higgs mechanism for giving mass to fundamental particles.

Since then, the Standard Model has predicted the results of experiment after experiment, including the discovery of several varieties of quarks and of the W and Z bosons – heavy particles that are for weak interactions what the photon is for electromagnetism. The possibility that neutrinos aren’t massless was overlooked in the 1960s, but slipped easily into the Standard Model in the 1990s, a few decades late to the party.

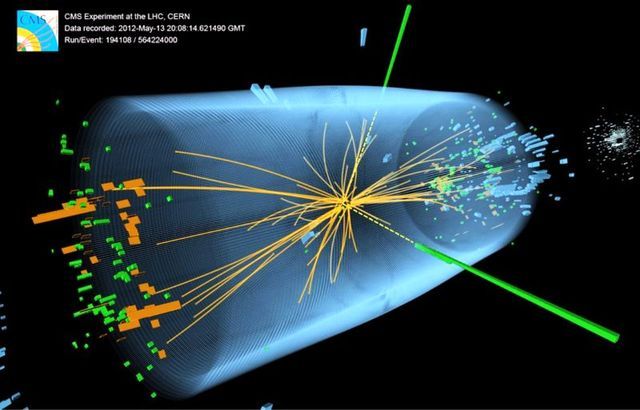

3D view of an event recorded at the CERN particle accelerator showing characteristics expected from the decay of the SM Higgs boson to a pair of photons (dashed yellow lines and green towers). Image via McCauley, Thomas; Taylor, Lucas; for the CMS Collaboration CERN.

Discovering the Higgs boson in 2012, long predicted by the Standard Model and long sought after, was a thrill but not a surprise. It was yet another crucial victory for the Standard Model over the dark forces that particle physicists have repeatedly warned loomed over the horizon. Concerned that the Standard Model didn’t adequately embody their expectations of simplicity, worried about its mathematical self-consistency, or looking ahead to the eventual necessity to bring the force of gravity into the fold, physicists have made numerous proposals for theories beyond the Standard Model. These bear exciting names like Grand Unified Theories, Supersymmetry, Technicolor, and String Theory.

Sadly, at least for their proponents, beyond-the-Standard-Model theories have not yet successfully predicted any new experimental phenomenon or any experimental discrepancy with the Standard Model.

After five decades, far from requiring an upgrade, the Standard Model is worthy of celebration as the Absolutely Amazing Theory of Almost Everything.

Bottom line: What is The Standard Model theory of particle physics?

earthsky.org/human-world/standard-model-of-particle-physics


Post by HAL on May 31, 2018 20:23:43 GMT

..“What a dull name for the most accurate scientific theory known to human beings … As a theoretical physicist, I’d prefer ‘The Absolutely Amazing Theory of Almost Everything’.”

Odd then that many eminent particle physicists appear to consider the standard model a kludge.

A theory with bits bolted on to it every time a problem with it arose.

The problem seems to be that they have more or less reached the limits of experimental research into this area. And the predictions are moving further ahead of the proof.

They need to back up a bit and actually test some of this stuff in the real world.

Maybe do some practical stuff like setting up a radio observatory on the dark side of the Moon.

HAL


Post by swamprat on Jun 1, 2018 2:24:46 GMT

Surgical technique improves sensation, control of prosthetic limb

New study describes the first human implementation of novel approach to limb amputation

Date: May 30, 2018

Source: Brigham and Women's Hospital

Summary:

Researchers have invented a new neural interface and communication paradigm that is able to send movement commands from the central nervous system to a robotic prosthesis, and relay proprioceptive feedback describing movement of the joint back to the central nervous system in return.

Humans can accurately sense the position, speed and torque of their limbs, even with their eyes shut. This sense, known as proprioception, allows humans to precisely control their body movements. Despite significant improvements to prosthetic devices in recent years, researchers have been unable to provide this essential sensation to people with artificial limbs, limiting their ability to accurately control their movements. Researchers at the Center for Extreme Bionics at the MIT Media Lab have invented a new neural interface and communication paradigm that is able to send movement commands from the central nervous system to a robotic prosthesis, and relay proprioceptive feedback describing movement of the joint back to the central nervous system in return. This new paradigm, known as the agonist-antagonist myoneural interface (AMI), involves a novel surgical approach to limb amputation in which dynamic muscle relationships are preserved within the amputated limb. The AMI was validated in extensive pre-clinical experimentation at MIT, prior to its first surgical implementation in a human patient at Brigham and Women's Faulkner Hospital.

In a paper published today in Science Translational Medicine, the researchers describe the first human implementation of the agonist-antagonist myoneural interface (AMI), in a person with below-knee amputation. The paper represents the first time information on joint position, speed and torque has been fed from a prosthetic limb into the nervous system, according to senior author and project director Hugh Herr, a professor of media arts and sciences at the MIT Media Lab. "Our goal is to close the loop between the peripheral nervous system's muscles and nerves, and the bionic appendage," says Herr.

To do this, the researchers used the same biological sensors that create the body's natural proprioceptive sensations. The AMI consists of two opposing muscle-tendons, known as an agonist and an antagonist, which are surgically connected in series so that when one muscle contracts and shortens -- upon either volitional or electrical activation -- the other stretches, and vice versa. This coupled movement enables natural biological sensors within the muscle-tendon to transmit electrical signals to the central nervous system, communicating muscle length, speed and force information, which is interpreted by the brain as natural joint proprioception. This is how muscle-tendon proprioception works naturally in human joints, Herr says. "Because the muscles have a natural nerve supply, when this agonist-antagonist muscle movement occurs information is sent through the nerve to the brain, enabling the person to feel those muscles moving, both their position, speed and load," he says. By connecting the AMI with electrodes, the researchers can detect electrical pulses from the muscle, or apply electricity to the muscle to cause it to contract. "When a person is thinking about moving their phantom ankle, the AMI that maps to that bionic ankle is moving back and forth, sending signals through the nerves to the brain, enabling the person with an amputation to actually feel their bionic ankle moving throughout the whole angular range," Herr says.

Decoding the electrical language of proprioception within nerves is extremely difficult, according to Tyler Clites, first author of the paper and graduate student lead on the project. "Using this approach, rather than needing to speak that electrical language ourselves, we use these biological sensors to speak the language for us," Clites says. "These sensors translate mechanical stretch into electrical signals that can be interpreted by the brain as sensations of position, speed and force."

The AMI was first implemented surgically in a human patient at Brigham and Women's Faulkner Hospital, Boston, by Matthew J Carty, MD, one of the paper's authors, a surgeon in the Division of Plastic and Reconstructive Surgery and an MIT research scientist. In this operation, two AMIs were constructed in the residual limb at the time of primary below-knee amputation, with one AMI to control the prosthetic ankle joint, and the other to control the prosthetic subtalar joint.

"We knew that in order for us to validate the success of this new approach to amputation, we would need to couple the procedure with a novel prosthesis that could take advantage of the additional capabilities of this new type of residual limb," Carty says. "Collaboration was critical, as the design of the procedure informed the design of the robotic limb, and vice versa." Towards this end, an advanced prosthetic limb was built at MIT and electrically linked to the patient's peripheral nervous system using electrodes placed over each AMI muscle following the amputation surgery. The researchers then compared the movement of the AMI patient with that of four people who had undergone a traditional below-knee amputation procedure, using the same advanced prosthetic limb. They found that the AMI patient had more stable control over movement of the prosthetic device, and was able to move more efficiently than those with the conventional amputation. They also found that the AMI patient quickly displayed natural, reflexive behaviors such as extending the toes towards the next step when walking down a set of stairs.

These behaviors are essential to natural human movement, and were absent in all of the people who had undergone a traditional amputation. What's more, while the patients with conventional amputation reported feeling disconnected from the prosthesis, the AMI patient quickly described feeling that the bionic ankle and foot had become a part of their own body. "This is pretty significant evidence that the brain and the spinal cord in this patient adopted the prosthetic leg as if it were his biological limb, enabling those biological pathways to become active once again," Clites says. "We believe proprioception is fundamental to that adoption."

It is difficult for an individual with a lower limb amputation to gain a sense of embodiment with their artificial limb, according to Daniel Ferris, the Robert W. Adenbaum Professor of Engineering Innovation at the University of Florida, who was not involved in the research. "This is ground breaking. The increased sense of embodiment by the amputee subject is a powerful result of having better control of and feedback from the bionic limb," Ferris says. "I expect that we will see individuals with traumatic amputations start to seek out this type of surgery and interface for their prostheses -- it could provide a much greater quality of life for amputees."

The researchers have since carried out the AMI procedure on nine other below-knee amputees, and are planning to adapt the technique for those needing above-knee, below-elbow and above-elbow amputations.

"Previously humans have used technology in a tool-like fashion," Herr says. "We are now starting to see a new era of human-device interaction, of full neurological embodiment, in which what we design becomes truly part of us, part of our identity."

The current study has its roots in a research project that received initial funding in 2014, when Carty was selected as the winner of the inaugural Stepping Strong Innovator Awards granted by The Gillian Reny Stepping Strong Center for Trauma Innovation. The awards and center were established by a family that survived the Boston Marathon bombings and committed to support groundbreaking projects in innovative trauma research and care. This support allowed the team to quickly focus on all of the foundational work that was necessary to prepare in advance of taking this innovation to the operating room.

Funding for this work was also provided by MIT Media Lab Consortia and a generous gift from Google, Inc. Prosthetic design and fabrication funded in part by US Army MRMC (W81XWH-14-C-0111).

Story Source:

Materials provided by Brigham and Women's Hospital. Note: Content may be edited for style and length.

Journal Reference:

Tyler R. Clites, Matthew J. Carty, Jessica B. Ullauri, Matthew E. Carney, Luke M. Mooney, Jean-François Duval, Shriya S. Srinivasan, Hugh M. Herr. Proprioception from a neurally controlled lower-extremity prosthesis. Science Translational Medicine, 2018; 10 (443): eaap8373 DOI: 10.1126/scitranslmed.aap8373


Post by swamprat on Jun 1, 2018 2:26:52 GMT

Meanwhile, on the other end of the spectrum.....:

Cometh the cyborg: Improved integration of living muscles into robots

Date: May 30, 2018

Source: Institute of Industrial Science, The University of Tokyo

Summary:

Researchers have developed a novel method of growing whole muscles from hydrogel sheets impregnated with myoblasts. They then incorporated these muscles as antagonistic pairs into a biohybrid robot, which successfully performed manipulations of objects. This approach overcame earlier limitations of a short functional life of the muscles and their ability to exert only a weak force, paving the way for more advanced biohybrid robots.

The new field of biohybrid robotics involves the use of living tissue within robots, rather than just metal and plastic. Muscle is one potential key component of such robots, providing the driving force for movement and function. However, in efforts to integrate living muscle into these machines, there have been problems with the force these muscles can exert and the amount of time before they start to shrink and lose their function.

Now, in a study reported in the journal Science Robotics, researchers at The University of Tokyo Institute of Industrial Science have overcome these problems by developing a new method that progresses from individual muscle precursor cells, to muscle-cell-filled sheets, and then to fully functioning skeletal muscle tissues. They incorporated these muscles into a biohybrid robot as antagonistic pairs mimicking those in the body to achieve remarkable robot movement and continued muscle function for over a week.

The team first constructed a robot skeleton on which to install the pair of functioning muscles. This included a rotatable joint, anchors where the muscles could attach, and electrodes to provide the stimulus to induce muscle contraction. For the living muscle part of the robot, rather than extract and use a muscle that had fully formed in the body, the team built one from scratch. For this, they used hydrogel sheets containing muscle precursor cells called myoblasts, holes to attach these sheets to the robot skeleton anchors, and stripes to encourage the muscle fibers to form in an aligned manner.

"Once we had built the muscles, we successfully used them as antagonistic pairs in the robot, with one contracting and the other expanding, just like in the body," study corresponding author Shoji Takeuchi says. "The fact that they were exerting opposing forces on each other stopped them shrinking and deteriorating, like in previous studies."

The team also tested the robots in different applications, including having one pick up and place a ring, and having two robots work in unison to pick up a square frame. The results showed that the robots could perform these tasks well, with activation of the muscles leading to flexing of a finger-like protuberance at the end of the robot by around 90°.

"Our findings show that, using this antagonistic arrangement of muscles, these robots can mimic the actions of a human finger," lead author Yuya Morimoto says. "If we can combine more of these muscles into a single device, we should be able to reproduce the complex muscular interplay that allow hands, arms, and other parts of the body to function."

Story Source:

Materials provided by Institute of Industrial Science, The University of Tokyo. Note: Content may be edited for style and length.

Journal Reference:

Yuya Morimoto, Hiroaki Onoe, Shoji Takeuchi. Biohybrid robot powered by an antagonistic pair of skeletal muscle tissues. Science Robotics, 2018; 3 (18): eaat4440 DOI: 10.1126/scirobotics.aat4440


Post by swamprat on Jun 2, 2018 2:36:44 GMT

A Major Physics Experiment Just Detected A Particle That Shouldn't Exist

By Rafi Letzter, Staff Writer | June 1, 2018 04:49pm ET

Scientists have produced the firmest evidence yet of so-called sterile neutrinos, mysterious particles that pass through matter without interacting with it at all.

The first hints of these elusive particles turned up decades ago. But after years of dedicated searches, scientists have been unable to find any other evidence for them, with many experiments contradicting those old results. These new results now leave scientists with two robust experiments that seem to demonstrate the existence of sterile neutrinos, even as other experiments continue to suggest sterile neutrinos don't exist at all.

That means there's something strange happening in the universe that is making humanity's most cutting-edge physics experiments contradict one another.

Sterile neutrinos

Back in the mid-1990s, the Liquid Scintillator Neutrino Detector (LSND), an experiment at Los Alamos National Laboratory in New Mexico, found evidence of a mysterious new particle: a "sterile neutrino" that passes through matter without interacting with it. But that result couldn't be replicated; other experiments simply couldn't find any trace of the hidden particle. So the result was set aside.

Now, MiniBooNE, a follow-up experiment at Fermi National Accelerator Laboratory (Fermilab) near Chicago, has picked up the hidden particle's scent again. A new paper posted to the preprint server arXiv offers compelling enough evidence of the missing neutrino to make physicists sit up and take notice.

If MiniBooNE's new results hold up, "That would be huge; that's beyond the standard model; that would require new particles ... and an all-new analytical framework," said Kate Scholberg, a particle physicist at Duke University who was not involved in the experiment.

The Standard Model of physics has dominated scientists' understanding of the universe for more than half a century. It amounts to a list of particles that, together, go a long way toward explaining how matter and energy interact in the cosmos. Some of these particles, like quarks and electrons, are pretty easy to imagine: They're the building blocks of the atoms that make up everything we'll ever touch with our hands. Others, like the three known neutrinos, are more abstract: They're high-energy particles that stream through the universe, barely interacting with other matter. Billions of neutrinos from the sun pass through the tip of your finger every second, but they're overwhelmingly unlikely to have any impact on the particles of your body.

Electron, muon and tau neutrinos — the three known "flavors" — do interact with matter, though, through both the weak force (one of the four fundamental forces of the universe) and gravity. (Their antimatter twins sometimes interact with matter as well.) That means specialized detectors can find them, streaming down from the sun as well as from certain human sources, such as nuclear reactions. But the LSND experiment, Scholberg told Live Science, provided the first firm evidence that what humans could detect might not be the full picture.

As waves of neutrinos stream through space, they periodically "oscillate," jumping back and forth between one flavor and another, she explained. Both LSND and MiniBooNE involve firing beams of neutrinos at a detector hidden behind an insulator to block out all other radiation. (In LSND, the insulator was water; in MiniBooNE, it's a vat of oil.) And they carefully count how many neutrinos of each type strike the detector.
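For readers who want a feel for what "oscillating" means numerically, here is a minimal sketch of the textbook two-flavor approximation, P = sin²(2θ) · sin²(1.267 · Δm² · L / E), with Δm² in eV², L in km and E in GeV. This is the usual simplified formula, not the analysis used by LSND or MiniBooNE, and every number in the example is a made-up illustrative value rather than a measured one.

```python
import math

def appearance_probability(sin2_2theta, delta_m2_ev2, baseline_km, energy_gev):
    """Two-flavor oscillation probability (textbook approximation).

    sin2_2theta   -- mixing strength sin^2(2*theta), between 0 and 1
    delta_m2_ev2  -- mass-squared difference in eV^2
    baseline_km   -- distance from source to detector in km
    energy_gev    -- neutrino energy in GeV
    """
    phase = 1.267 * delta_m2_ev2 * baseline_km / energy_gev
    return sin2_2theta * math.sin(phase) ** 2

# Hypothetical parameters chosen only to show the shape of the curve.
for energy in (0.2, 0.4, 0.8, 1.2):
    p = appearance_probability(sin2_2theta=0.01, delta_m2_ev2=1.0,
                               baseline_km=0.5, energy_gev=energy)
    print(f"E = {energy} GeV: P = {p:.5f}")
```

The probability never exceeds the mixing strength, and it swings up and down as the ratio of distance to energy changes, which is why these experiments count neutrinos of each flavor at a fixed distance from the beam.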

Both experiments have now reported more neutrino detections than the Standard Model's description of neutrino oscillation can explain, the authors wrote in the paper. That suggests, they wrote, that the neutrinos are oscillating into hidden, heavier, "sterile" neutrinos that the detector can't directly detect before oscillating back into the detectable realm. The MiniBooNE result had a statistical significance of 4.8 sigma, just shy of the 5.0 threshold physicists look for. (A 5-sigma result has 1-in-3.5-million odds of being the result of random fluctuations in the data.) The researchers wrote that MiniBooNE and LSND combined represent a 6.1-sigma result (meaning odds of less than one in 500 million of being a fluke), though some researchers expressed a degree of skepticism about that claim.
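The sigma figures can be turned into "one in N" odds with a few lines of Python. This sketch assumes the one-sided tail of a normal distribution, which is the convention that reproduces the article's 1-in-3.5-million figure for 5 sigma; the function names are invented for illustration.

```python
import math

def one_sided_p_value(sigma):
    """Probability that pure noise fluctuates upward by at least `sigma` standard deviations."""
    return 0.5 * math.erfc(sigma / math.sqrt(2))

def odds_one_in(sigma):
    """Express the p-value as 'one in N'."""
    return 1.0 / one_sided_p_value(sigma)

for sigma in (4.8, 5.0, 6.1):
    print(f"{sigma} sigma: about 1 in {odds_one_in(sigma):,.0f}")
```

Under that assumption 5 sigma works out to roughly 1 in 3.5 million, 4.8 sigma is a little more likely to be a fluke than that, and 6.1 sigma is rarer than 1 in 500 million.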

If LSND and MiniBooNE were the only neutrino experiments on Earth, Scholberg said, that would be the end of the matter. The Standard Model would be updated to include some sort of sterile neutrino.

But there's a problem. Other major neutrino experiments, like the underground Oscillation Project with Emulsion-Tracking Apparatus (OPERA) experiment in Italy, haven't found the anomaly that both LSND and MiniBooNE have now seen.

As recently as 2017, after the IceCube Neutrino Observatory in Antarctica failed to turn up evidence for sterile neutrinos, researchers made the case to Live Science that another reported signal of the particles — missing antineutrinos around nuclear reactors — had been a mistake, and was actually the result of bad calculations.

Sterile neutrinos weren't a rejected idea, Scholberg said, but they weren't accepted science.

The MiniBooNE result complicates the particle picture.

"There are people who doubt the result," she said, "but there's no reason to think there's anything wrong [with the experiment itself]."

It's possible, she said, that the anomaly in the LSND and MiniBooNE experiments might turn out to be the "systematics," meaning there's something about the way neutrinos are interacting with the experimental setup that scientists don't yet understand. But it's also looking more and more possible that scientists are going to have to explain why so many other experiments aren't spotting very real sterile neutrinos that are turning up in Fermilab and Los Alamos Lab. And if that's the case, they'll have to revise their entire understanding of the universe in the process.

www.livescience.com/62721-sterile-neutrino-detected-fermilab.html


Post by supersidufo on Jun 2, 2018 8:14:27 GMT

Elon Musk: ‘Mark my words — A.I. is far more dangerous than nukes’

Catherine Clifford | May 17, 2018

Tesla and SpaceX boss Elon Musk has doubled down on his dire warnings about the danger of artificial intelligence.

The billionaire tech entrepreneur called AI more dangerous than nuclear warheads and said there needs to be a regulatory body overseeing the development of super intelligence, speaking at the South by Southwest tech conference in Austin, Texas on Sunday.

It is not the first time Musk has made frightening predictions about the potential of artificial intelligence — he has, for example, called AI vastly more dangerous than North Korea — and he has previously called for regulatory oversight.

Some have called his tough talk fear-mongering. Facebook founder Mark Zuckerberg said Musk’s doomsday AI scenarios are unnecessary and “pretty irresponsible.” And Harvard professor Steven Pinker also recently criticized Musk’s tactics.

Musk, however, is resolute, calling those who push against his warnings “fools” at SXSW.

“The biggest issue I see with so-called AI experts is that they think they know more than they do, and they think they are smarter than they actually are,” said Musk. “This tends to plague smart people. They define themselves by their intelligence and they don’t like the idea that a machine could be way smarter than them, so they discount the idea — which is fundamentally flawed.”

Based on his knowledge of machine intelligence and its developments, Musk believes there is reason to be worried.

“I am really quite close, I am very close, to the cutting edge in AI and it scares the hell out of me,” said Musk. “It’s capable of vastly more than almost anyone knows and the rate of improvement is exponential.”

Musk pointed to machine intelligence playing the ancient Chinese strategy game Go to demonstrate rapid growth in AI’s capabilities. For example, the London-based company DeepMind, which was acquired by Google in 2014, developed an artificial intelligence system, AlphaGo Zero, that learned to play Go without any human intervention. It learned simply from randomized play against itself. The Alphabet-owned company announced this development in a paper published in October.

Musk worries AI’s development will outpace our ability to manage it in a safe way.

“So the rate of improvement is really dramatic. We have to figure out some way to ensure that the advent of digital super intelligence is one which is symbiotic with humanity. I think that is the single biggest existential crisis that we face and the most pressing one.”

To do this, Musk recommended the development of artificial intelligence be regulated.

“I am not normally an advocate of regulation and oversight — I think one should generally err on the side of minimizing those things — but this is a case where you have a very serious danger to the public,” said Musk.

“It needs to be a public body that has insight and then oversight to confirm that everyone is developing AI safely. This is extremely important. I think the danger of AI is much greater than the danger of nuclear warheads by a lot and nobody would suggest that we allow anyone to build nuclear warheads if they want. That would be insane,” he said at SXSW.

“And mark my words, AI is far more dangerous than nukes. Far. So why do we have no regulatory oversight? This is insane.”

Musk called for regulatory oversight of artificial intelligence in July too, speaking to the National Governors Association. “AI is a rare case where I think we need to be proactive in regulation than be reactive,” Musk said in July.

In his analysis of the dangers of AI, Musk differentiates between case-specific applications of machine intelligence like self-driving cars and general machine intelligence, which he has described previously as having “an open-ended utility function” and having a “million times more compute power” than case-specific AI.

“I am not really all that worried about the short term stuff. Narrow AI is not a species-level risk. It will result in dislocation, in lost jobs, and better weaponry and that kind of thing, but it is not a fundamental species level risk, whereas digital super intelligence is,” explained Musk.

“So it is really all about laying the groundwork to make sure that if humanity collectively decides that creating digital super intelligence is the right move, then we should do so very very carefully — very very carefully. This is the most important thing that we could possibly do.”

Still, Musk is in the business of artificial intelligence with his venture Neuralink, a company working to create a way to connect the brain with machine intelligence.

Musk hopes “that we are able to achieve a symbiosis” with artificial intelligence: “We do want a close coupling between collective human intelligence and digital intelligence, and Neuralink is trying to help in that regard by trying to create a high bandwidth interface between AI and the human brain,” he said.

edu.proarch.top/?p=283


Post by HAL on Jun 3, 2018 20:10:00 GMT

Swamp,

If these things do not react with anything, how are they detected ?

HAL.

Who is pulling his workshop apart looking for two good nine Volt batteries to power his Very High Impedance Buffer so he can buffer his 1 Meg Ohm DVM to stop it interfering with a circuit reading.
